To get there, we’ve set our sights on a key milestone: a 100,000-qubit system by 2033. We’re now sponsoring and partnering on targeted research with the University of Tokyo and the University of Chicago to develop a system capable of addressing some of the world’s most pressing problems, problems that even today’s most advanced supercomputers may never be able to solve.

But why 100,000? At last year’s IBM Quantum Summit, where we debuted the 433-qubit IBM Quantum Osprey processor, we demonstrated that we’d charted the paths forward to scaling quantum processors to thousands of qubits. Beyond that, however, the path is less clear.

Why? It’s a combination of footprint, cost, chip yield, energy, and supply chain challenges, to name a few. To ensure that these roadblocks don’t stop our progress, we must collaborate to do fundamental research across physics, engineering, and computer science.

Just as no single company is responsible for the current era of computing, no single company can bring about this new one. The world’s greatest institutions are now coming together to tackle this problem, and we need the help of the broader quantum industry.

Scaling quantum computers

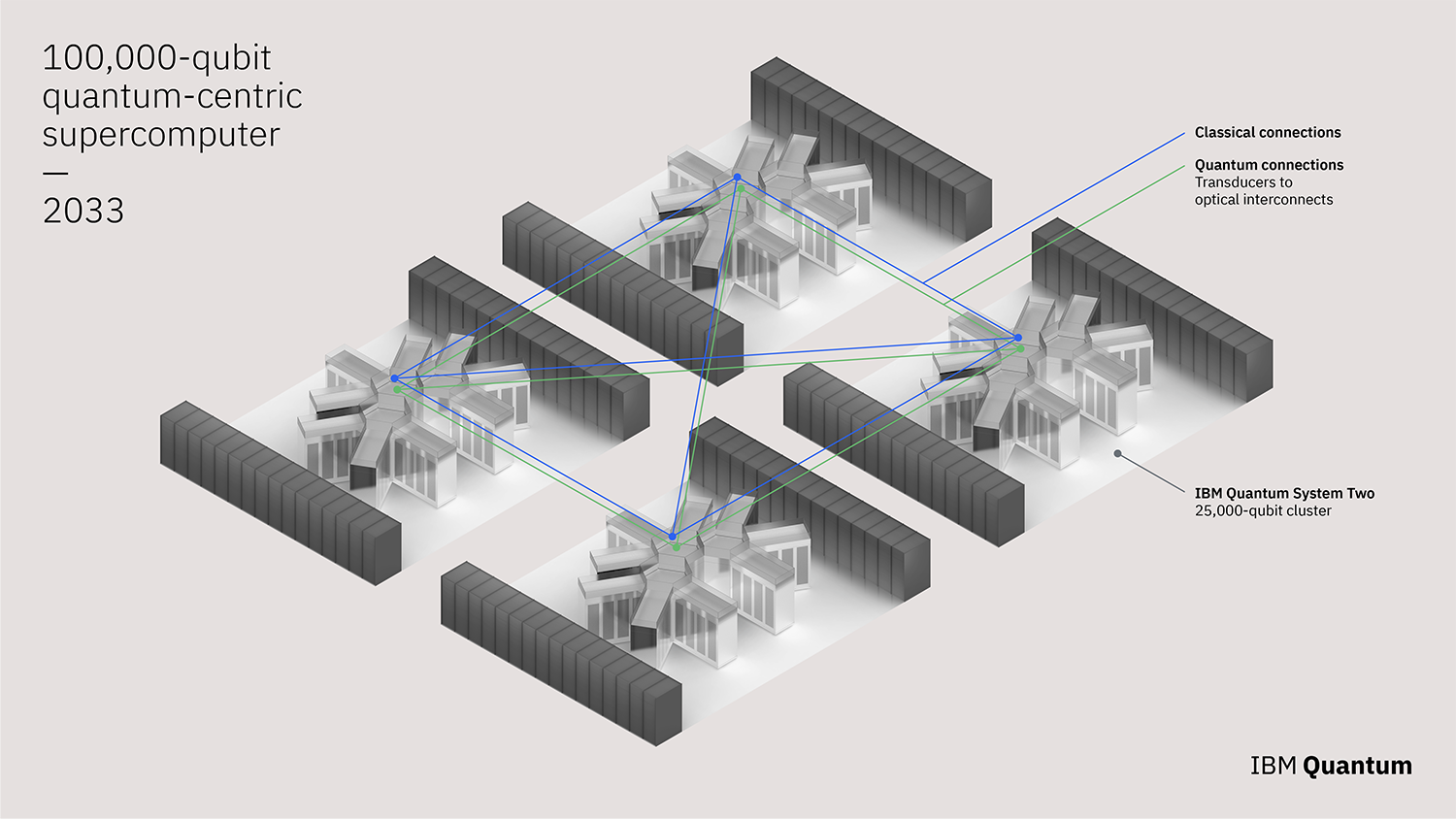

Last year, we released our answer for how we plan to scale quantum computers to a level where they can perform useful tasks. With that foundation set, we now see four key areas requiring further advancement in order to realize the 100,000-qubit supercomputer: Quantum communication, middleware for quantum, quantum algorithms and error correction (capable of using multiple quantum processors and quantum communication), and components with the necessary supply chain.

Pictured: A concept rendering of IBM Quantum’s 100,000-qubit quantum-centric supercomputer, expected to be deployed by 2033.

We’ll be sponsoring research at the University of Chicago and the University of Tokyo to advance each of these four areas.

The University of Tokyo will lead efforts to identify, scale, and run end-to-end demonstrations of quantum algorithms. It will also begin to develop and build the supply chain around the new components required for such a large system, including cryogenics, control electronics, and more. The University of Tokyo has already demonstrated leadership in these areas: it leads the Quantum Innovation Initiative Consortium (QIIC), which brings together academia, government, and industry to develop quantum computing technology and build an ecosystem around it.

Through the IBM-UTokyo Lab, the university has already begun researching and developing algorithms and applications related to quantum computing, while laying the groundwork for the hardware and supply chain necessary to realize a computer at this scale.

Meanwhile, the University of Chicago will lead efforts to bring quantum communication to quantum computation, with classical and quantum parallelization plus quantum networks. It will also lead efforts to improve middleware for quantum, adding serverless quantum execution, circuit knitting (techniques that partition large quantum circuits into subcircuits that fit on smaller devices), and physics-informed error resilience so that we can run programs across these systems.

The University of Chicago has a proven track record of leadership in quantum research and quantum communication through the Chicago Quantum Exchange (CQE). The CQE operates a 124-mile quantum network for studying long-range quantum communication. Additionally, many of the University of Chicago’s software techniques have helped give structure to quantum software and have influenced middleware at IBM and elsewhere in the industry.

We recognize how challenging it will be to build a 100,000-qubit system. But we see the path before us — we have our list of known knowns and known unknowns. And if unforeseen challenges arise, we as an industry should be eager to take them on. We think that together with the University of Chicago and the University of Tokyo, 100,000 connected qubits is an achievable goal by 2033.

At IBM, we’ll continue following our development roadmap to realize quantum-centric supercomputing, while enabling the community to pursue progressive performance improvements. That means finding quantum advantage over classical processors while treating quantum as one piece of a broader HPC paradigm, with classical and quantum working as one computational unit. With this holistic approach, plus our push toward the 100,000-qubit mark, we’re going to bring useful quantum computing to the world, together.