For weeks, researchers at IBM Quantum and UC Berkeley were taking turns running increasingly complex physical simulations. Youngseok Kim and Andrew Eddins, scientists with IBM Quantum, would test them on the 127-qubit IBM Quantum Eagle processor. UC Berkeley’s Sajant Anand would attempt the same calculation using state-of-the-art classical approximation methods on supercomputers located at Lawrence Berkeley National Lab and Purdue University. They’d check each method against an exact brute-force classical calculation.

Eagle returned accurate answers every time. And watching how both computational paradigms performed as the simulations grew increasingly complex made both teams feel confident the quantum computer was still returning answers more accurate than the classical approximation methods, even in the regime beyond the capabilities of the brute force methods.

“The level of agreement between the quantum and classical computations on such large problems was pretty surprising to me personally,” said Eddins. “Hopefully it’s impressive to everyone.”

The two teams were collaborating to test whether today’s noisy, error-prone quantum computers were useful for calculating accurate results for certain kinds of problems. And today, they’ve published the results [1] of that research on the cover of Nature. IBM Quantum and UC Berkeley have presented evidence that noisy quantum computers will be able to provide value sooner than expected, all thanks to advances in IBM Quantum hardware and the development of new error mitigation methods.

The journal Nature featured the article “Evidence for the utility of quantum computing before fault tolerance” on the cover of its June 15, 2023 issue.

This work excites us for a lot of reasons. It’s a realistic scenario using currently available IBM Quantum processors to explore meaningful computations and realistic applications before the era of fault tolerance. Beyond just providing a proof-of-principle demonstration, we are delivering results accurate enough to be useful. The model of computation we explore with this work is a core facet of many algorithms designed for near-term quantum devices. And the circuits themselves, 127 qubits running 60 steps’ worth of quantum gates, are some of the longest and most complex run successfully yet.

And with the confidence that our systems are beginning to provide utility beyond classical methods alone, we can begin transitioning our fleet of quantum computers into one consisting solely of processors with 127 qubits or more. With this transition, all of our users will have access to systems like those used in this research.

It’s important to note that this isn’t a claim that today’s quantum computers exceed the abilities of classical computers — other classical methods and specialized computers may soon return correct answers for the calculation we were testing. But that’s not the point. The continued back-and-forth of quantum running a complex circuit and classical computers verifying the quantum results will improve both classical and quantum, while providing users confidence in the abilities of near-term quantum computers.

“We can start to think of quantum computers as a tool for studying problems that would be hard to study otherwise,” said Sarah Sheldon, senior manager, Quantum Theory and Capabilities at IBM Quantum.

IBM Quantum's Youngseok Kim, co-author of “Evidence for the utility of quantum computing before fault tolerance,” and Sarah Sheldon, senior manager of Quantum Theory and Capabilities, IBM.

Learning what’s right by learning what’s wrong

Back in 2017, researchers on our IBM Quantum team announced a breakthrough: we simulated the energy of small molecules [2] like lithium hydride and beryllium hydride using quantum computers. That Nature cover story, “Hardware-efficient Variational Quantum Eigensolver for Small Molecules and Quantum Magnets,” showed how to implement a new quantum algorithm capable of efficiently computing the lowest energy state of small molecules. These simulations were exciting: we did something with quantum computers. But they were nowhere close to the accuracy or size that chemists cared about, due to the noise in our systems. And that was okay. The real breakthrough was that we were getting an idea of why these simulations were wrong.

Around the same time, the team published a theory paper [3] that set an important signpost for us. If we could really understand what’s causing the noise, then we could potentially undo its effects. And then maybe we could extract useful information from noisy quantum computers for certain kinds of problems.

The team started playing around and realized that we could amplify the effects of the noise with a technique called pulse stretching, which relies on the same tools we use to control our qubits. Essentially, if we increased the amount of time it took to run individual operations on each qubit, then we’d scale the amount of noise by the same factor.

Pulse stretching allowed the team to dramatically improve the accuracy of our LiH simulations [4] with four qubits in 2019. But a question remained: how far could these methods be scaled?

Extending these error-mitigated simulations to 26 qubits hinted to us that these methods could produce outcomes that are closer to the ideal answer than the approximations that could be efficiently obtained from a classical computer. This essentially laid out the blueprint [5] for our current work. If we could enhance the scale and quality of our hardware, and develop ways to amplify the noise with greater control, perhaps we could estimate expectation values to a degree of accuracy where they could become useful for actual applications.

That breakthrough became the paper the team put on the arXiv in 2022: Probabilistic Error Cancellation [6], or PEC. We realized that we could assume, based on our knowledge of the machine, a basic noise model. Then, we could learn certain parameters to create a representative model of the noise. And, when repeating the same quantum computation many times, we could study the effect of inserting new gates either to nullify the noise or to amplify it. This model scaled reasonably with the size of the quantum computer, so modeling the noise of a big processor was no longer a herculean task.
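To make the idea of a learned noise model that can be nullified or amplified more concrete, here is a minimal sketch. It assumes a Pauli-type noise model in which each learned rate damps every observable it anticommutes with; the function names, the `gain` parameter, and the example rates are all hypothetical and chosen only for illustration, not taken from the team's code.

```python
import math

def anticommute(p: str, q: str) -> bool:
    """Two Pauli strings anticommute when they clash (both non-identity and
    different) on an odd number of qubit positions."""
    clashes = sum(1 for a, b in zip(p, q) if a != "I" and b != "I" and a != b)
    return clashes % 2 == 1

def pauli_fidelity(observable: str, rates: dict, gain: float = 1.0) -> float:
    """Factor by which the modeled noise rescales <observable>.

    Assumption: each rate contributes exp(-2 * rate) whenever its Pauli
    anticommutes with the observable. `gain` rescales every rate:
    gain = 0 means no noise, gain = 1 is the learned noise, gain > 1 is
    amplified noise.
    """
    total = sum(rate for pauli, rate in rates.items()
                if anticommute(pauli, observable))
    return math.exp(-2.0 * gain * total)

# Hypothetical learned rates for one two-qubit layer (illustrative numbers).
learned_rates = {"XI": 0.002, "IZ": 0.001, "ZZ": 0.004, "YX": 0.003}

for gain in (0.0, 1.0, 1.5, 2.0):
    print(gain, pauli_fidelity("ZI", learned_rates, gain))
```

In this toy model, amplifying the noise is just a matter of rescaling the learned rates, which is what makes a compact, representative noise model so convenient to work with.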

“The critical piece was being able to manipulate the noise beyond pulse stretching,” said Abhinav Kandala, manager, Quantum Capabilities and Demonstrations at IBM Quantum. “Once that began to work, we could do more complicated extrapolations that could suppress the bias from the noise in a way we weren’t able to do previously.”

This noise amplification was the final piece of the puzzle. With a representative noise model, one could manipulate and amplify the noise more accurately. Then we could apply classical post-processing to extrapolate down to what the calculation should look like without noise, using a method called Zero Noise Extrapolation, or ZNE.
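The extrapolation step can be sketched in a few lines. The example below is a toy, not the team's pipeline: it assumes the measured expectation value decays exponentially with the noise gain, uses made-up values for the ideal answer and decay rate, and fits a straight line to the logarithm of the measurements to read off the zero-noise value. All names and numbers are hypothetical.

```python
import math

def noisy_expectation(ideal: float, decay: float, gain: float) -> float:
    """Toy device model: noise damps the ideal value exponentially in the gain."""
    return ideal * math.exp(-decay * gain)

def extrapolate_to_zero_noise(gains, values):
    """Least-squares fit of log(value) = a + b * gain, evaluated at gain = 0.

    Assumes every measured value is positive so the logarithm is defined.
    """
    n = len(gains)
    logs = [math.log(v) for v in values]
    mean_g = sum(gains) / n
    mean_l = sum(logs) / n
    slope = (sum((g - mean_g) * (l - mean_l) for g, l in zip(gains, logs))
             / sum((g - mean_g) ** 2 for g in gains))
    intercept = mean_l - slope * mean_g
    return math.exp(intercept)

IDEAL = 0.8    # the answer a noise-free machine would return (made up)
DECAY = 0.35   # how strongly the native noise damps the signal (made up)

gains = [1.0, 1.3, 1.6]  # gain 1.0 is the hardware's native noise level
measured = [noisy_expectation(IDEAL, DECAY, g) for g in gains]
mitigated = extrapolate_to_zero_noise(gains, measured)

print("measured at native noise:", measured[0])
print("extrapolated to zero noise:", mitigated)
```

On real hardware the measurements also carry statistical shot noise, so the extrapolated value comes with an uncertainty that this noiseless toy does not show.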

In parallel, we knew that error mitigation requires performant hardware. We had to keep pushing forward on scale, quality, and speed. With our 127-qubit IBM Quantum Eagle processors, we finally had systems capable of running circuits large enough to strain classical methods, with better coherence times and lower error rates than ever before. It was time to use error mitigation to put our state-of-the-art processors to the test.

This chart illustrates the basic principles of ZNE, a method of error mitigation for quantum systems. With ZNE, we amplify the noise in our system to several different levels and measure the quantity of interest at each level. Then we fit those measurements with an extrapolation method that takes us back to the zero-noise limit.

Putting quantum to the test against classical

Error mitigation techniques like ZNE aren’t a panacea. Realizing the full potential of quantum computing will require that we build redundancy into the system and allow multiple qubits to work together to correct one another, an approach called error correction. However, we realized that error mitigation gave us a way to produce certain kinds of accurate calculations before the era of full-scale error correction, even with noisy quantum computers. And these calculations could be useful.

We just needed to test that our error mitigation techniques actually worked. We started by running increasingly complex quantum calculations on our 127-qubit processors, and then checking our work with classical computers. But we’re a quantum computing company, so we shouldn’t be the ones checking our own work with classical computers. We needed outside expertise to verify that these calculations were correct. So we enlisted the help of graduate researcher Sajant Anand and associate professor Michael Zaletel from UC Berkeley, plus postdoctoral researcher Yantao Wu from RIKEN iTHEMS and based at UC Berkeley, who were introduced to the team by a former IBM intern.

“IBM asked our group if we would be interested in taking the project on, knowing that our group specialized in the computational tools necessary for this kind of experiment,” Anand said. “I thought it was an interesting project, at first, but I didn’t expect the results to turn out the way they did.”

There are several ways to simulate quantum circuits on classical computers. The first are “brute force” methods that calculate the expectation value much as a physics student would by hand. This requires first writing all of the information about the wavefunction into a list, and then creating a grid of numbers (also known as a matrix) to perform the calculation.

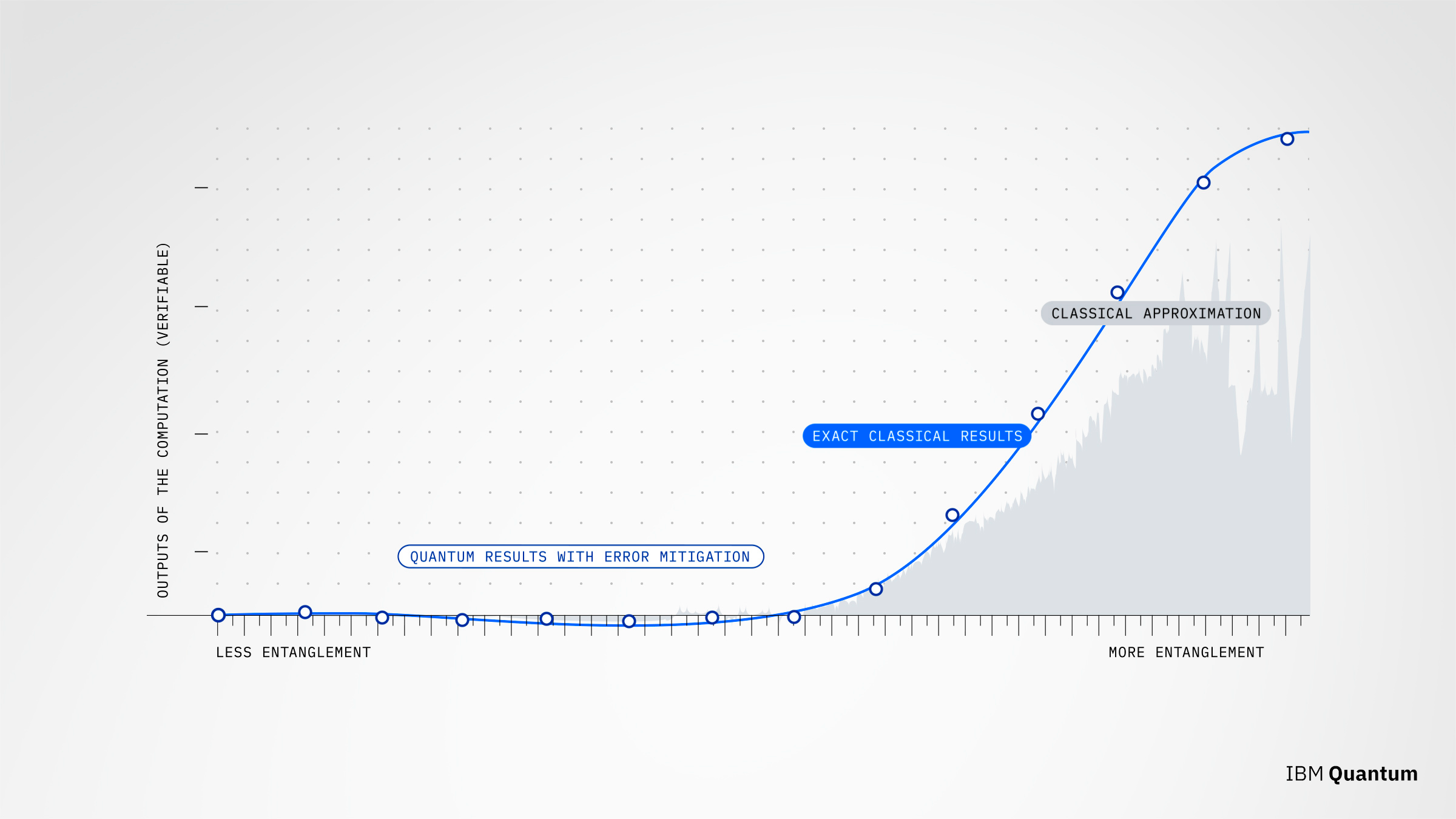

This chart shows the performance of the quantum computer and of state-of-the-art classical approximation methods, each compared to the exact classical “brute force” method, for a series of increasingly challenging computational problems.

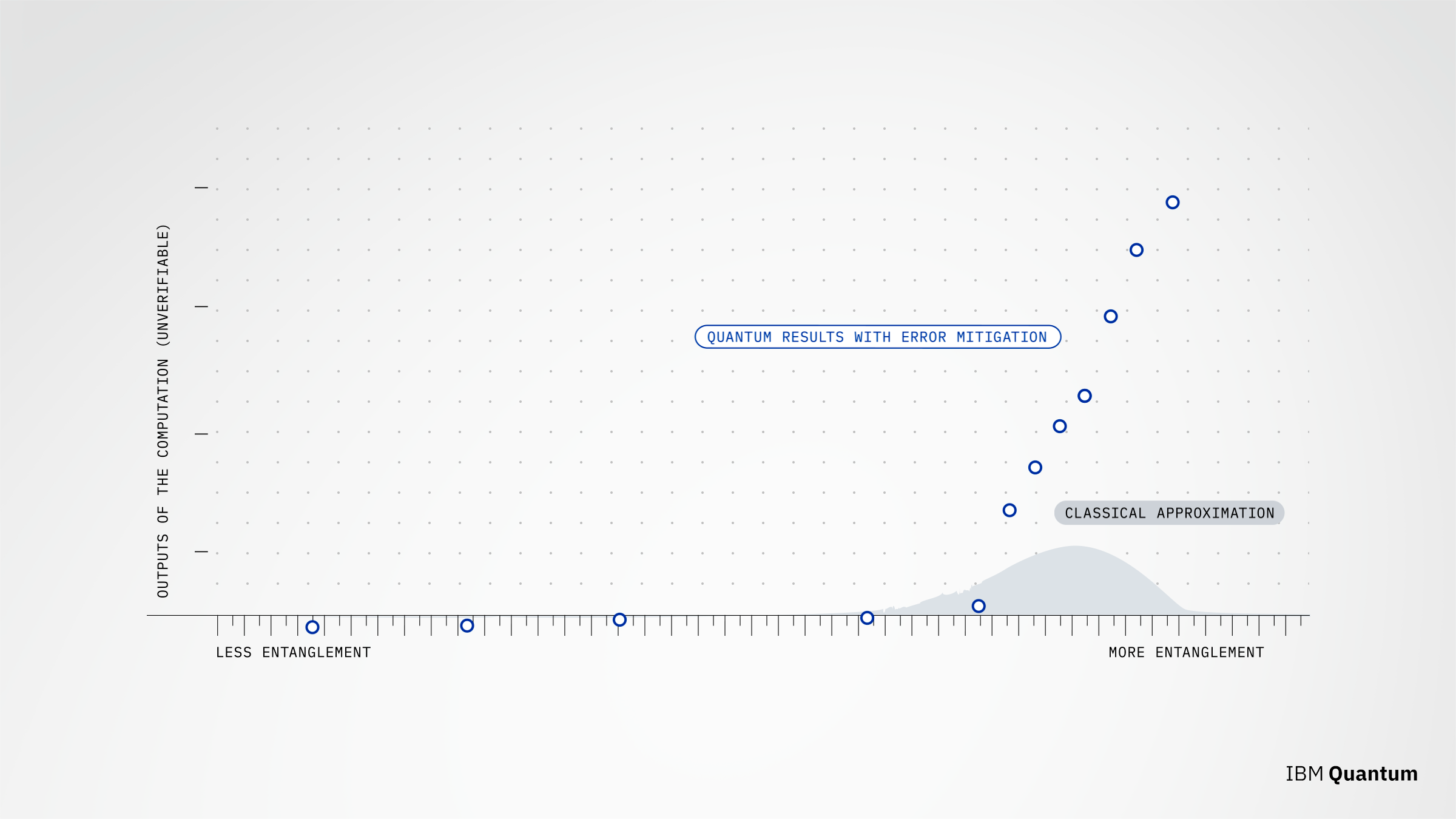

This chart compares the performance of the quantum computer versus classical approximation methods in the regime beyond the abilities of the exact classical “brute force” methods.

These brute-force methods grow twice as difficult with every additional qubit and therefore eventually can’t capture the complexity of big enough circuits. But for a small subset of quantum circuits, there are tricks that let us use the brute-force calculation to calculate exact answers, even if the circuit uses all 127 of IBM Quantum Eagle’s qubits. We’d start by using these circuits and methods to benchmark both the classical and quantum methods.
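A quick back-of-the-envelope calculation shows why the doubling matters. The sketch below assumes the simplest brute-force approach, storing every amplitude of the wavefunction as one double-precision complex number (16 bytes); the function name is ours, and real simulators may use other storage layouts.

```python
BYTES_PER_AMPLITUDE = 16  # one complex number stored as two 64-bit floats

def statevector_bytes(num_qubits: int) -> int:
    """Memory needed to hold all 2**n amplitudes of an n-qubit wavefunction."""
    return BYTES_PER_AMPLITUDE * 2 ** num_qubits

for n in (10, 30, 50, 127):
    print(f"{n:>3} qubits: {statevector_bytes(n):.3e} bytes")
```

Thirty qubits already need about 17 gigabytes, and each extra qubit doubles that figure, which is why a general 127-qubit circuit is far out of reach for this kind of exact bookkeeping.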

To handle more complex circuits, the Berkeley team used methods that approximate the wavefunction with fewer numbers using two different tensor network state (TNS) methods. These classical approximation methods try to represent many-qubit quantum states as networks of tensors — a more complex way to organize many numbers. Tensor network state methods come with a set of instructions on how to compute with that data and how to take all of that data and recover specific information about the quantum state from it, like the expectation value. These methods are kind of like image compression, getting rid of less-necessary information for the sake of keeping only what’s required to get accurate answers when limited by computational power and space. They keep working even after the brute force methods have failed.
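To give a feel for how much a tensor network can compress, the sketch below counts stored numbers for one simple kind of tensor network, a one-dimensional matrix product state with a capped "bond dimension." This choice is an assumption made for easy bookkeeping; the text above does not say which two tensor network methods the Berkeley team used, and the function name and the cap of 64 are illustrative only.

```python
def mps_parameter_count(num_qubits: int, max_bond: int) -> int:
    """Numbers stored by a matrix product state on a chain of qubits.

    Each site holds a tensor of shape (left bond, 2, right bond). A bond can
    never need to be larger than the state space on either side of it, so it
    is capped by that as well as by `max_bond`.
    """
    bonds = [min(max_bond, 2 ** i, 2 ** (num_qubits - i))
             for i in range(num_qubits + 1)]
    return sum(2 * bonds[i] * bonds[i + 1] for i in range(num_qubits))

def full_state_count(num_qubits: int) -> int:
    """Numbers stored by the uncompressed brute-force wavefunction."""
    return 2 ** num_qubits

for n in (10, 40, 127):
    print(n, mps_parameter_count(n, max_bond=64), full_state_count(n))
```

The compressed count grows roughly in proportion to the number of qubits, while the full count doubles with each one. The price is that a capped bond dimension throws information away, much like heavy image compression, so accuracy can suffer on strongly entangled states.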

The experiment would go as follows. We’d use all 127 qubits of our IBM Quantum Eagle processor to simulate the changing behavior of a system that naturally maps to a quantum computer, called the quantum Ising model. Ising models are simplifications of nature that represent interacting atoms as a lattice of quantum two-choice systems in an energy field. These systems look a lot like the two-state qubits that make up our quantum computers, making them a good fit for testing the abilities of our methods. We would use ZNE to try and accurately calculate a property of the system called the average magnetization. This is an expectation value — essentially a weighted average of the possible outcomes of the circuit.
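As a small illustration of what "a weighted average of the possible outcomes" means here, the sketch below estimates the average magnetization from a table of measurement counts. It assumes each qubit is measured as 0 or 1, that 0 maps to spin +1 and 1 maps to spin -1, and that the counts are stored as a dictionary of bitstrings; the function name and data format are ours, not the experiment's.

```python
def average_magnetization(counts: dict) -> float:
    """Average spin per qubit, weighted by how often each outcome occurred.

    `counts` maps a measured bitstring such as "0110" to the number of times
    it was observed. Assumed convention: "0" is spin +1, "1" is spin -1.
    """
    if not counts:
        raise ValueError("counts must contain at least one outcome")
    shots = sum(counts.values())
    num_qubits = len(next(iter(counts)))
    total = 0.0
    for bitstring, occurrences in counts.items():
        spin_sum = sum(1 if bit == "0" else -1 for bit in bitstring)
        total += occurrences * spin_sum / num_qubits
    return total / shots

# Made-up counts for a three-qubit toy example.
print(average_magnetization({"000": 700, "001": 200, "111": 100}))
```

Outcomes that occur more often pull the average harder, which is exactly the weighting the expectation value describes.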

Simultaneously, the UC Berkeley team would attempt to simulate the same system using tensor network methods with the help of advanced supercomputers located at Lawrence Berkeley National Lab’s National Energy Research Scientific Computing Center (NERSC) and at Purdue University. Specifically, the computations were partly run on NERSC’s “Cori” supercomputer, partly on Lawrence Berkeley National Lab’s internal “Lawrencium” cluster, and partly on Purdue’s NSF-funded Anvil supercomputer. We would then compare the two against the exact methods and see how each did.

The trials meant turning multiple knobs. We could run circuits with more qubits, with more quantum gates, or with more complex entanglement. We could also ask to calculate higher-weight observables — the weight is a measure of how many qubits we’re interested in measuring at the end of the calculation, where lower weight means you’re interested in fewer qubits, and higher weight means you’re interested in more qubits.
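The notion of observable weight is easy to pin down in code. In the sketch below, an observable is written as a string with one letter per qubit, where `I` means the qubit is ignored; this string format, the function names, and the assumption that position i of a measured bitstring belongs to position i of the observable are all illustrative conventions of ours.

```python
def pauli_weight(pauli: str) -> int:
    """Number of qubits the observable actually looks at (non-'I' letters)."""
    return sum(1 for letter in pauli if letter != "I")

def z_observable_expectation(pauli: str, counts: dict) -> float:
    """Expectation value of an observable built only from 'I' and 'Z'.

    Each measured bitstring contributes +1 if it has an even number of 1s on
    the qubits marked 'Z', and -1 if that number is odd.
    """
    if any(letter not in "IZ" for letter in pauli):
        raise ValueError("this sketch only handles observables made of I and Z")
    shots = sum(counts.values())
    total = 0
    for bitstring, occurrences in counts.items():
        ones = sum(1 for letter, bit in zip(pauli, bitstring)
                   if letter == "Z" and bit == "1")
        total += occurrences * (1 if ones % 2 == 0 else -1)
    return total / shots

print(pauli_weight("IZZIX"))
print(z_observable_expectation("ZZI", {"110": 10, "100": 10}))
```

A weight-1 observable depends on a single qubit's outcomes, while a higher-weight observable depends on correlations across many qubits at once, which is part of what makes it a harder target.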

The quantum methods continued to agree with the exact methods. But the classical approximation methods started to falter as the difficulty was turned up.

Finally, we asked both computers to run calculations beyond what could be calculated exactly, and the quantum computer returned the answer we were more confident was correct. And while we can’t prove that answer was actually correct, Eagle’s success on the previous runs of the experiment gave us confidence that it was.

Bringing useful quantum computing to the world

The field mostly agrees that realizing the full potential of quantum computers, like running Shor’s algorithm for factoring large numbers into primes, will require error correction. IBM devotes a lot of research energy to advancing error correction. However, there is debate over whether near-term quantum hardware can provide a computational advantage for useful problems before the full realization of error correction. We think it can, and this paper gives us good reason to believe that noisy quantum computers, including processors available today, will be able to provide value before the era of fault tolerance.

“The crux of the work is that we can now use all 127 of Eagle’s qubits to run a pretty sizable and deep circuit, and the numbers come out correct,” said Kristan Temme, principal research staff member and manager for the Theory of Quantum Algorithms group at IBM Quantum.

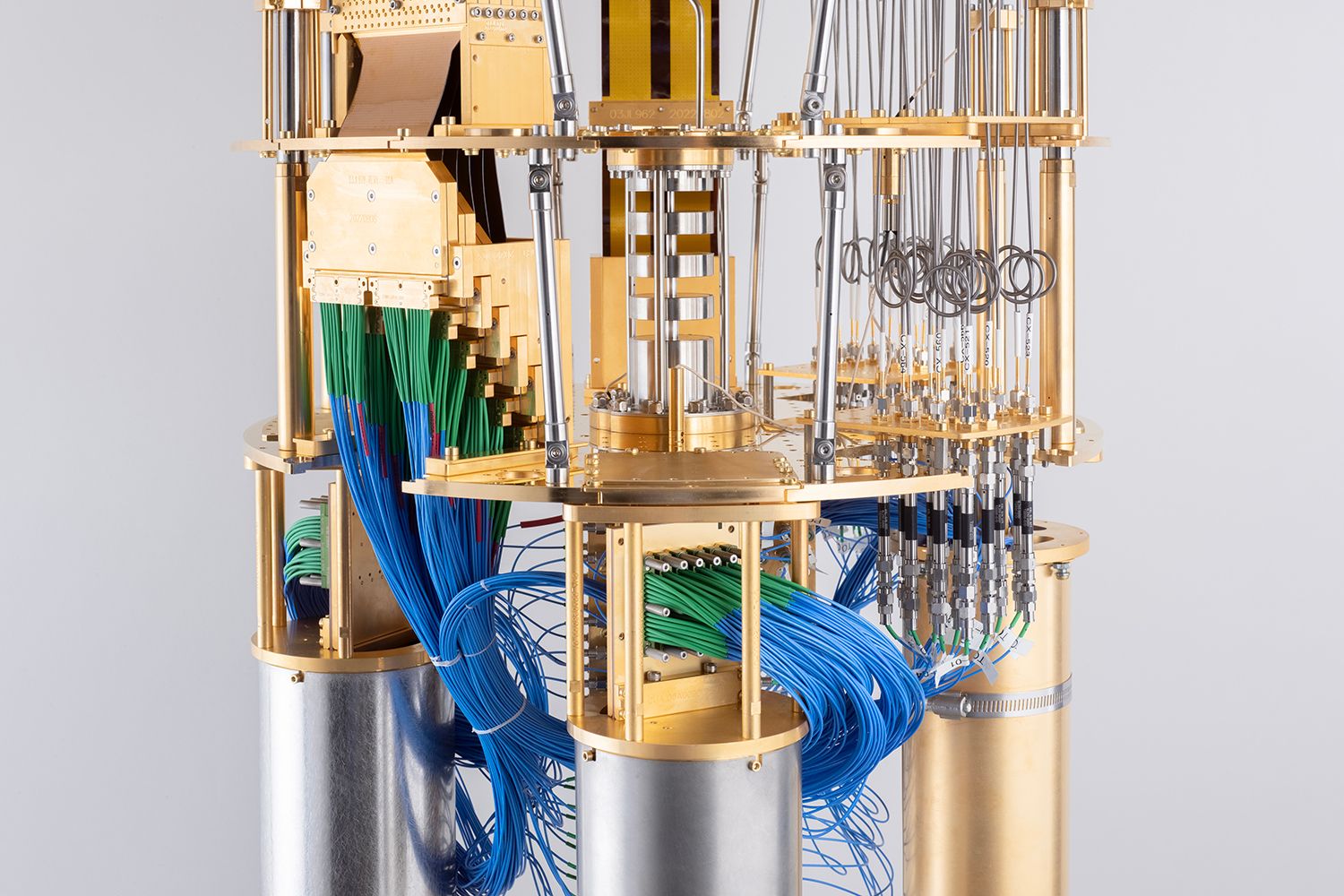

The interior view of the cryostat that cools the IBM Quantum Eagle, a utility-scale quantum processor at 127 qubits. Utility scale is a point at which quantum computers could serve as a scientific tool to explore a new scale of problems that classical methods may not be able to solve.

This paper is a data point showing that we’re entering the era of quantum advantage. We have long said that quantum advantage will be a continuous path, requiring two things:

- First, we must demonstrate that a quantum computer can outperform a classical computer.

- Second, we must find problems for which that speedup is useful and figure out how to map them onto qubits.

This paper has us straddling that first point; while we showed a quantum algorithm outperforming leading classical methods, we fully expect that the classical computing community will develop methods that verify the results we presented. And then we’ll run even harder calculations. This back-and-forth is exciting for us, because it’s making computing better overall.

“It immediately points out the need for new classical methods,” said Anand. And they’re already looking into those methods. “Now, we’re asking if we can take the same error mitigation concept and apply it to classical tensor network simulations to see if we can get better classical results.”

Meanwhile, for the quantum researchers, “this was kind of a learning process,” said Kim. “How do we optimize our calibration strategy to run quantum circuits like these? What can we expect, and what do we need to do to improve things for the future? These were nice things to find along the way as we were running the project.”

This research is exciting for us because not only did classical computers prove that our complex quantum simulations were accurate, but the approximate, scalable classical simulation methods produced results whose accuracy we were less confident in than the quantum computer’s.

“This work gives us the ability to maybe use a quantum computer as a verification tool for the classical algorithms,” said Anand. “It’s flipping the script on what’s usually done.”

While we can’t prove the quantum answers were correct for the most advanced circuits we tried, we’re building confidence that quantum computers are providing value beyond classical computers for this problem.

We’re confident enough in these systems plus error mitigation that we plan to upgrade our fleet to focus on processors with 127 qubits or more in the coming years. The transition means that all of our users will be able to explore applications on systems that can outperform today’s state-of-the-art classical methods. Even our open plan users will have time-limited access to 127-qubit systems. We want all IBM Quantum users to be able to run circuits like those used in this paper.

Our IBM Quantum System One installations at the University of Tokyo, Fraunhofer Institute, and Cleveland Clinic, and the in-progress installations at Yonsei University, Fundación Ikerbasque, and Quebec will all soon have 127-qubit IBM Quantum Eagle processors so that they can explore the era of utility with us, too.

This is an important moment for the quantum community. As quantum begins providing utility, we think it is now ready for exploration by a new set of users — those who are using high-performance computing to solve hard problems, such as those shown in this paper. We’re keen on hearing their feedback. All the while, we are also forming working groups around this exciting news, collaborations between IBM and our partners to research use cases for near-term quantum processors in domains like healthcare and life sciences or machine learning.

And we encourage you to explore error-mitigated circuits incorporating 127 qubits or more as we prepare to bring you processors capable of returning accurate expectation values for 100-qubit by 100-gate-depth circuits in less than a day’s runtime by the end of 2024.

Bringing useful quantum computing to the world requires all of us working together. We’re excited to see how this work will inspire developers, the IBM Quantum Network, and the broader quantum community to continue pushing the field forward.

S. Anand’s and M. Zaletel’s work was supported by the U.S. Department of Energy, Office of Science, Basic Energy Sciences, under Early Career Award No. DE-SC0022716. Y. Wu’s work was supported by the RIKEN iTHEMS fellowship.