Technical Blog Post

Abstract

Scaling the Docker Bridge Network.

Body

Scaling the Docker Bridge Network – By David J. Wilder, IBM Linux Technology Center

IBM has boasted that 10,000 Docker containers can be spun up simultaneously on a single IBM Power8 host. You can read more about this accomplishment here:

www.ibm.com/blogs/bluemix/2015/11/docker-insane-scale-on-ibm-power-systems

Spinning up 10,000 containers is indeed a big accomplishment; however, to be useful, containers need network connectivity. Can Docker's virtual network scale to 10,000 endpoints? I conducted experiments to explore this question. I found that when network activity is pushed to multiple thousands of containers, bottlenecks appear in the Linux kernel, resulting in dropped packets and unreliable throughput. In this post I will discuss the issues I encountered when scaling the bridge network and the solutions I implemented.

Docker's bridge network is built using the Linux bridge. The Linux bridge emulates a layer 2 network, supporting the sending of unicast, multicast and broadcast messages between its nodes. I found that the major obstacle in scaling a bridge is the handling of broadcast packets. In a physical network such as Ethernet, a broadcast packet is placed on the wire once and is available to each node to receive or discard. To emulate this behavior the Linux bridge must deliver broadcast packets to every port of the bridge. This is accomplished by cloning each broadcast packet and flooding a copy to every endpoint. If a bridge has 1024 ports (the default maximum bridge size), one broadcast packet must be processed by the kernel 1024 times. The default size of the kernel's network ingress queue is 1000; therefore we need to increase this queue size or some packets will be dropped. Scaling to 10,000 ports requires a far larger ingress queue. However, increasing the ingress queue size comes with a potential cost of increased latency.
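As a rough sketch of the sizing problem described above, the snippet below compares the number of clones produced by one broadcast with the current queue limit. It assumes the "network ingress queue" is the backlog controlled by the net.core.netdev_max_backlog sysctl, which the text does not name explicitly; clones_for_broadcasts is a hypothetical helper, and the suggested value is only a lower bound for a single broadcast, not a tuning recommendation.

```shell
#!/bin/bash
# Hypothetical helper: number of packet clones the bridge creates when
# <broadcasts> broadcast frames are each flooded to <ports> bridge ports.
clones_for_broadcasts() {
    local ports=$1 broadcasts=$2
    echo $(( ports * broadcasts ))
}

# Assumption: the ingress queue is sized by net.core.netdev_max_backlog.
backlog_file=/proc/sys/net/core/netdev_max_backlog
current=1000    # default queue size cited in the text
[ -r "$backlog_file" ] && current=$(cat "$backlog_file")

needed=$(clones_for_broadcasts 1024 1)
echo "clones for one broadcast on a 1024-port bridge: $needed"
echo "current backlog limit: $current"
if [ "$needed" -gt "$current" ]; then
    # Printed rather than executed: changing the sysctl requires root.
    echo "consider: sysctl -w net.core.netdev_max_backlog=$needed"
fi
```

Printing the sysctl command instead of running it keeps the sketch safe to try on any host.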

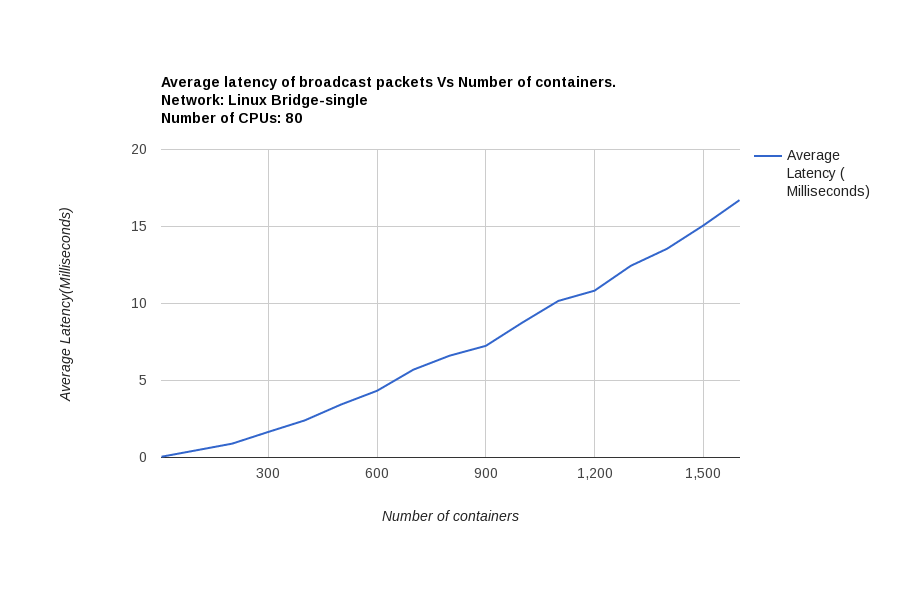

To illustrate the impact of broadcast packets on latency, I measured packet latency as I increased the number of containers participating in the network. I injected broadcast packets into the network and measured the average time a packet spent in the kernel. As is evident in the accompanying chart, the average latency of broadcast packets increased linearly with the number of nodes in the network. Further experiments demonstrated that the majority of the observed latency was due to network packets waiting on the network ingress queue.

The ebtables utility enables basic Ethernet frame filtering on a Linux bridge. It can be used to manage broadcast packet flow by restricting the use of broadcasts to a limited number of containers. A suitable ebtables rule can easily be implemented to fit the needs of your deployment. Two approaches can be used: restrict the sending of broadcast packets to select containers, or allow only select containers to receive broadcast packets. The choice of approach is determined by how broadcast packets are used in your environment. Using either approach judiciously will significantly reduce the impact of broadcast packets, allowing for larger networks and higher throughput. Unfortunately there is a catch with this approach.
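The script in note (2) covers the first approach (restricting senders). For the second approach, a minimal sketch might look like the following. It only prints the ebtables commands so they can be reviewed before being run as root. The function name and the veth port names are made up for illustration, and as written the rules match every broadcast frame, ARP included.

```shell
#!/bin/bash
# Hypothetical helper: print ebtables rules that stop forwarded broadcast
# frames from being delivered to the given bridge ports (veth interfaces).
block_broadcast_to_ports() {
    local port
    for port in "$@"; do
        # -d Broadcast matches ff:ff:ff:ff:ff:ff; -o selects the egress port.
        echo "ebtables -A FORWARD -d Broadcast -o $port -j DROP"
    done
}

# Example with made-up port names; review the output, then run it as root.
block_broadcast_to_ports veth1a2b3c veth4d5e6f
```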

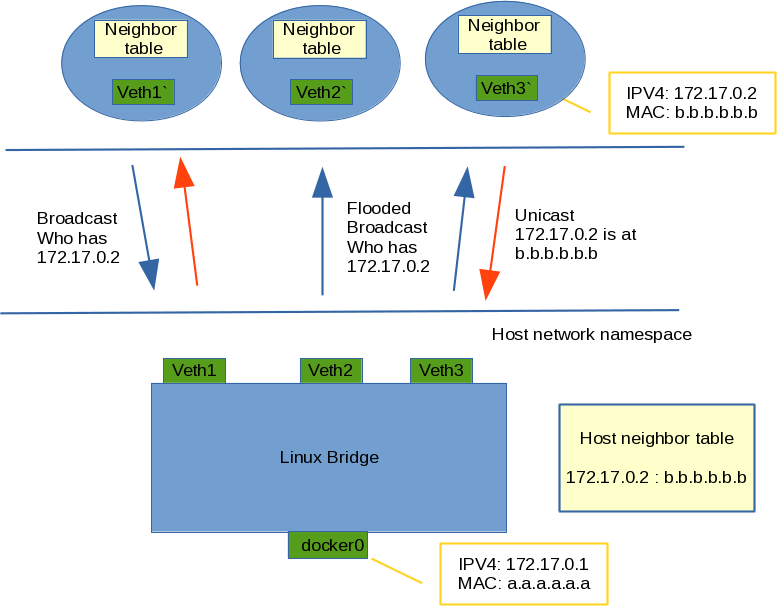

The bridge network is dependent on neighbor discovery and the ARP protocol. If ebtables is configured to block all broadcasts, including ARP broadcasts, our bridge would no longer function. An ebtables rule might be configured to allow ARP broadcast packets to pass through; however, I found that ARP broadcast packets in a large network can create significant overhead in the kernel. Diagram 1 depicts a Docker bridge network. Each container participating in the bridge network has its own neighbor table. Additionally, the host has a single neighbor table. Before IPv4 network traffic (other than broadcast traffic) can pass between containers (or from container to host), the container (or host) must create a neighbor table entry mapping the destination IPv4 address to the destination node's media access control address (MAC address). This is accomplished using the ARP protocol.

Diagram 1

Neighbor tables are populated by broadcasting ARP who-has packets. These packets are cloned (creating multiple pointers to the packet) and flooded to all ports of the bridge. The kernel must process all of these clones. The intended destination container (or the host) will respond with a unicast ARP reply; the remaining clones will be discarded by the kernel. In a 10,000 node network, for a single container to fully populate its neighbor table, the container will send individual ARP who-has broadcasts to all 10,000 containers. The bridge will clone and flood each packet to every container (and the host), so the kernel must process 100,000,000 broadcast packets! This can cause the kernel's network ingress queues to overflow, resulting in packet drops and connection establishment timeouts. I only had to scale to a few thousand containers to encounter these issues. Spreading the containers across multiple bridge networks reduces the impact but won't eliminate the problem.
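The arithmetic behind that figure is easy to check: each of the N who-has broadcasts is cloned once per container port, so the total grows with the square of the container count. A quick calculation, ignoring the host's extra port (arp_clones is just an illustrative name):

```shell
#!/bin/bash
# Clones processed when one container sends an ARP who-has for every
# container on the bridge: <containers> requests x <containers> ports.
arp_clones() {
    local containers=$1
    echo $(( containers * containers ))
}

for n in 1000 2000 10000; do
    printf '%6d containers -> %d clones\n' "$n" "$(arp_clones "$n")"
done
```

Even at 2,000 containers a single container's ARP sweep already produces four million clones, which is consistent with problems appearing at "a few thousand" containers.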

The ARP protocol was originally developed to allow a physical host to discover the MAC addresses of other physical hosts. It strikes me as odd that Docker containers continue to use ARP for container communications on a single host, given that the IPv4 to MAC address mapping is already known by Docker. Unfortunately Docker is currently not sharing this data with the kernel.

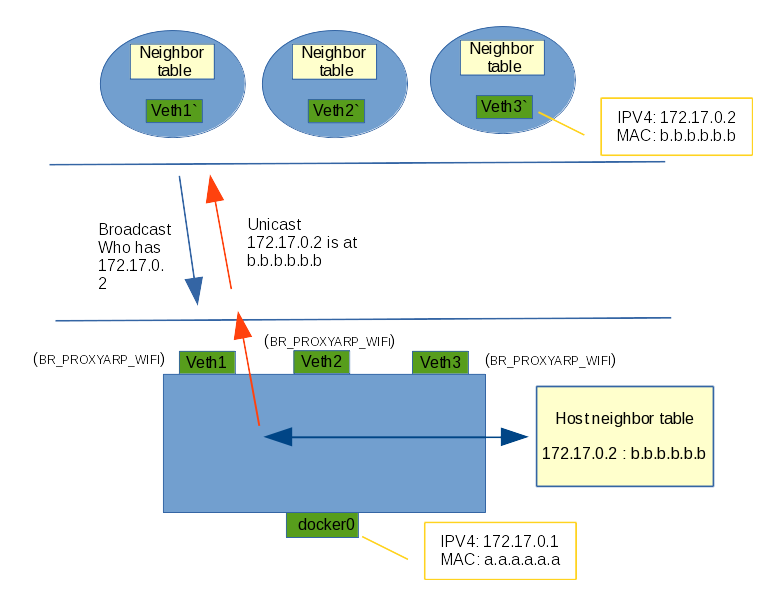

We need a way to reduce the impact of the ARP protocol. Luckily a solution is at hand with the Linux bridge proxy ARP feature (1). When enabled, the bridge will act as a proxy, directly answering ARP who-has requests and thus eliminating the need to flood these packets to every port on the bridge. To implement the bridge proxy ARP feature, three things must occur when creating containers:

1) Set the BR_PROXYARP_WIFI flag on the container's bridge port to disable ARP packet flooding.

2) Generate a permanent ARP entry in the host's ARP table mapping the container's IP address to its MAC address.

3) Generate a static FDB entry for the container's MAC address. The FDB (forwarding database) is used to forward packets through the bridge.
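For experimenting by hand, the three steps above can be approximated with iproute2. This is a sketch, not the libnetwork implementation: it assumes an iproute2 version whose ip link supports the proxy_arp_wifi option for bridge ports, and the helper name, port name and addresses are hypothetical. The commands are printed rather than executed so they can be reviewed before being run as root.

```shell
#!/bin/bash
# Hypothetical helper: print the commands for the three steps above.
# Arguments: bridge name, container's veth port on the host,
# container IP address, container MAC address.
proxyarp_commands() {
    local bridge=$1 port=$2 ip=$3 mac=$4
    # 1) Set BR_PROXYARP_WIFI on the container's bridge port.
    echo "ip link set dev $port type bridge_slave proxy_arp_wifi on"
    # 2) Permanent neighbor (ARP) entry in the host's table.
    echo "ip neigh replace $ip lladdr $mac dev $bridge nud permanent"
    # 3) Static FDB entry mapping the container's MAC to its port.
    echo "bridge fdb replace $mac dev $port master static"
}

# Example with made-up values.
proxyarp_commands docker0 veth1a2b3c 172.17.0.2 02:42:ac:11:00:02
```

Using replace rather than add for the neighbor and FDB entries avoids a failure when a dynamically learned entry already exists.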

Diagram 2

Diagram 2 illustrates how this works. When an ARP who-has enters the bridge, the host's neighbor table is consulted. If a matching entry is found and the target endpoint has BR_PROXYARP_WIFI set, the bridge will directly generate and send the reply, eliminating the need to flood the packet.

I have implemented the BR_PROXYARP_WIFI feature in Docker's libnetwork project. I hope to see this merged for a future release. When available, the proxy ARP feature is enabled when creating a Docker network by specifying com.docker.network.bridge.proxyarp=1. For example: docker network create --opt com.docker.network.bridge.proxyarp=1 <name>

When proxyarp is enabled, Docker will set the BR_PROXYARP_WIFI flag when attaching a container to the bridge, generate a permanent ARP entry in the host's neighbor table and create an FDB entry mapping the bridge port to the container's MAC.

This solution prevents the flooding of ARP who-has packets for container to container traffic; however, traffic between containers and the bridge's intrinsic interface (shown as docker0 in the diagram) will still result in some ARP packet flooding. This is because the BR_PROXYARP_WIFI flag can't be set on the bridge's intrinsic interface. I hope to introduce a kernel change to eliminate this issue in the future. In the meantime, adding a permanent ARP entry in the container's network namespace for the bridge's intrinsic interface will work around the problem.
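One way to apply that workaround by hand is to enter the container's network namespace with nsenter and add the entry with ip neigh. The sketch below only prints the command. The helper name, PID and addresses are made up, and eth0 as the interface name inside the container is an assumption.

```shell
#!/bin/bash
# Hypothetical helper: print a command that pins the bridge's address in a
# container's neighbor table. Arguments: container PID, bridge IP, bridge
# MAC, and the interface name inside the container (defaults to eth0).
pin_bridge_neighbor() {
    local pid=$1 bridge_ip=$2 bridge_mac=$3 ifname=${4:-eth0}
    echo "nsenter -t $pid -n ip neigh replace $bridge_ip lladdr $bridge_mac dev $ifname nud permanent"
}

# The PID can be looked up with:
#   docker inspect --format '{{ .State.Pid }}' <container>
pin_bridge_neighbor 12345 172.17.0.1 02:42:5f:00:00:01
```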

In this discussion I have suggested a two-pronged approach to managing broadcast traffic in a scaled bridge network: using ebtables to limit the use of broadcasts to specific containers (2), and enabling BR_PROXYARP_WIFI to eliminate the flooding of ARP packets. Combining these two techniques significantly reduces the impact of processing broadcast packets and allows larger-scale bridge-based networks to perform well.

(1) The BR_PROXYARP feature was originally introduced in the V3.19 Linux kernel. The feature had a side effect of limiting the propagation of any broadcast packets injected into bridge ports that enabled BR_PROXYARP. This feature was later extended by Jouni Malinen, adding support for IEEE 802.11 Proxy ARP with an optional set of rules that are needed to meet the IEEE 802.11 and Hotspot 2.0 requirements for ProxyARP. The previously added BR_PROXYARP behavior was left as-is and a new BR_PROXYARP_WIFI alternative was added. I chose to use BR_PROXYARP_WIFI for my implementation as it allowed for non-ARP broadcast traffic to be controlled with ebtables. The BR_PROXYARP_WIFI variant has been available since the V4.1 kernel.

(2) Here is an example script demonstrating how ebtables can be used to manage broadcast packets.

    #!/bin/bash
    # ManageBroadcasts: control which containers on Docker's default bridge
    # network are allowed to send broadcast frames through the bridge.

    usage() {
        echo "Usage: ManageBroadcasts [reset | allow <container> | disallow <container> | list]"
        exit 1
    }

    error() {
        echo "ERROR: $*" >&2
        exit 1
    }

    # Print the container's MAC address on the default "bridge" network.
    container_mac() {
        docker inspect --format='{{ .NetworkSettings.Networks.bridge.MacAddress }}' "$1" 2>/dev/null
    }

    [ "$(id -u)" -ne 0 ] && error "you must be root to run this script!"

    case "$1" in
    r*)
        # Rebuild the rule set: drop forwarded frames by default, accept all
        # non-broadcast frames, and hand broadcasts to the ALLOW-BROADCAST chain.
        read -r -p "Flushing and restarting ebtables. Press Enter to continue. " _
        ebtables -F
        ebtables -X ALLOW-BROADCAST &> /dev/null
        ebtables -P FORWARD DROP || error "setting policy."
        ebtables -I FORWARD -d ! Broadcast -j ACCEPT || error "creating new rule."
        ebtables -N ALLOW-BROADCAST || error "creating user-defined chain."
        ebtables -A FORWARD -j ALLOW-BROADCAST || error "setting jump."
        echo "Done."
        ;;
    a*)
        [ $# -ne 2 ] && usage
        MAC=$(container_mac "$2") || error "Unable to find container $2."
        [ -z "$MAC" ] && error "Container $2 has no MAC address on the default bridge network."
        # --Lmac2 lists MAC addresses with two hex digits per octet.
        ebtables -L ALLOW-BROADCAST --Lmac2 | grep -qi -- "$MAC" \
            && error "Container $2 ($MAC) is already allowed to send broadcasts."
        ebtables -A ALLOW-BROADCAST -s "$MAC" -j ACCEPT || error "Unable to add $MAC."
        echo "Container $2 ($MAC) is allowed to send broadcasts."
        ;;
    d*)
        [ $# -ne 2 ] && usage
        MAC=$(container_mac "$2") || error "Unable to find container $2."
        [ -z "$MAC" ] && error "Container $2 has no MAC address on the default bridge network."
        ebtables -D ALLOW-BROADCAST -s "$MAC" -j ACCEPT \
            || error "Unable to remove $2 ($MAC), possibly $2 was not in the list."
        echo "Container $2 ($MAC) is now disallowed from sending broadcasts."
        ;;
    l*)
        ebtables -L ALLOW-BROADCAST
        ;;
    *)
        usage
        ;;
    esac

UID

ibm16169923