How To

Summary

An IBM i Network File System (NFS) client can perform save and restore operations through a virtual optical device (type 632B, model 003) that is backed by image files on an NFS server. The NFS server can run on IBM i or on another platform that supports NFS. Special setup is required to use an NFS server with the IBM i save and restore interfaces.

The intent of this document is to provide setup instructions for an IBM i system so that virtual media stored on an NFS server can be used during basic IBM i save and restore operations. This document does not describe or support complete system backup and recovery that uses media stored on an NFS server. Media created by using these instructions cannot be used to perform an IBM i installation (D-mode IPL). NFS-backed optical devices are not recommended as primary backup devices. Use physical tape drives, VTLs, DVDs, or RDX drives to store important or long-term data.

Notes:

1. Data transferred through the virtual optical device is not encrypted. To add encryption, set up an encrypted tunnel, such as a VPN.

2. *PUBLIC needs RW authority to the image files on the IBM i server, so the data is not secure on the serving system.

3. Performance of the NFS-backed optical device is limited by network performance, traffic, and distance. As such, this device is the lowest-performing save or restore device, and its performance can vary as network conditions change.

4. If the NFS server is not an IBM i system, IBM does not support, and cannot advise on, the process required to perform exports or change authority on that server. It is assumed that the customer or their NFS server support team knows the required process.

Steps

NFS server requirements

To share virtual optical images through an NFS server, the server must meet the following requirements:

- The NFS server must be Version 3. Other versions are not supported.

- The NFS server must support User Datagram Protocol (UDP). Note: This requirement applies only when the IBM i NFS client is running IBM i 7.5 or earlier. IBM i 7.6 NFS clients support Transmission Control Protocol (TCP).

- Images must be created on the NFS server before running a save on an IBM i client. Save operations cannot dynamically create new images or dynamically extend the size of existing images, so some understanding of the size of the save operation is required.

- A volume list file must be created on the NFS server that contains a list of images to be used by an IBM i client. The volume list file must have the following characteristics:

  - The file must be located in the same NFS server directory as the image files.

  - The file must be named VOLUME_LIST.

  - Each entry in the file is either an image file name with access intent or a comment.

  - Image file names are limited to 127 characters.

  - All characters following a hash symbol (#) are considered a comment and are ignored.

  - The order of the image file names in the volume list file indicates the order the images are processed on the IBM i client.

  - The file must contain ASCII characters.
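The volume list rules above can be checked before the file is placed on the NFS server. The following Python sketch is illustrative only and is not part of any IBM tooling: the function name `parse_volume_list` is hypothetical, and it assumes that the image name and access intent are separated by whitespace, that image names contain no spaces, and that blank lines are ignored. None of these assumptions is stated in this document.

```python
MAX_NAME_LENGTH = 127  # image file name limit stated in the requirements


def parse_volume_list(text):
    """Check the contents of a VOLUME_LIST file against the documented rules.

    Returns (entries, problems):
      entries  -- list of (image_name, access_intent) in processing order
      problems -- list of strings describing each rule violation found
    """
    entries = []
    problems = []
    if not text.isascii():
        problems.append("file contains non-ASCII characters")
    for number, raw in enumerate(text.splitlines(), start=1):
        # Everything after a hash symbol is a comment and is ignored.
        content = raw.split("#", 1)[0].strip()
        if not content:
            continue  # assumption: blank and comment-only lines are skipped
        parts = content.split()
        if len(parts) != 2:
            # Assumption: an entry is exactly "<image name> <access intent>".
            problems.append(f"line {number}: expected '<image name> <access intent>'")
            continue
        name, intent = parts
        if len(name) > MAX_NAME_LENGTH:
            problems.append(
                f"line {number}: image name exceeds {MAX_NAME_LENGTH} characters"
            )
        # The entry is kept even when the name is too long, so order is preserved.
        entries.append((name, intent))
    return entries, problems
```

An empty `problems` list means that the text passed these checks only; it does not confirm that the image files exist or that the IBM i client accepts the list.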

IBM i NFS client requirements

To use virtual optical images through an NFS server, the IBM i NFS client must meet the following requirements:

- The IBM i must have a Version 4 Internet Protocol (IP) address.

- During setup, the shared NFS server directory must be mounted over a directory on the IBM i client.

- Either an IBM i service tools server or a LAN console connection must be configured that uses a Version 4 IP address. For more information, see the 'Verify/configure the Service Tools Server' section or Configuring the service tools server for DST https://www.ibm.com/docs/en/i/7.4?topic=server-configuring-service-tools-dst.

- A 632B-003 virtual optical device must be created that uses the IP address of the NFS server.
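Because the client, the service tools server or LAN console connection, and the virtual optical device all rely on Version 4 IP addresses, it can help to check the planned addresses before starting. The following is a minimal Python sketch that uses the standard `ipaddress` module. The function names and the labels passed to them are hypothetical, and the check is purely syntactic: it does not test whether an address is reachable or already in use.

```python
import ipaddress


def is_ipv4(address):
    """Return True if the string is a syntactically valid IPv4 address."""
    try:
        return ipaddress.ip_address(address).version == 4
    except ValueError:
        # Not an IP address at all (for example, a host name or a typo).
        return False


def check_addresses(addresses):
    """Map each labelled address to True (IPv4) or False (anything else)."""
    return {label: is_ipv4(value) for label, value in addresses.items()}
```

For example, `check_addresses({"NFS server": "1.2.3.4"})` reports `True` for the sample address used in this document, while an IPv6 address or a host name is reported as `False`.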

Verify/configure the Service Tools Server

This configuration must be done for each IBM i client partition, before setting up the NFS server and client.

The type of system and configuration determines what type of setup is required to configure the Service Tools Server.

If Operations Console with LAN Connectivity is configured, then there is no additional setup required. If Operations Console with LAN Connectivity is not configured, then a LAN adapter or IOP must be tagged, depending on the model of your system.

One way to help determine whether your client system has a service tools server for DST configured:

1. Log on to SST.

2. Select option 8, Work with service tools user IDs and Devices.

3. Press F5.

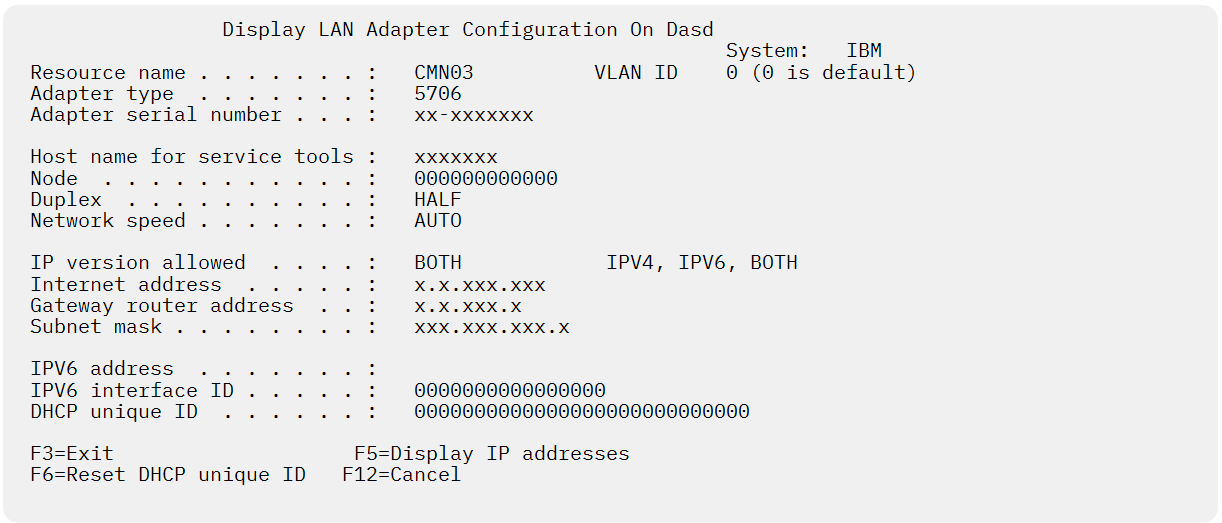

If a service tools server is configured, the following display is seen.

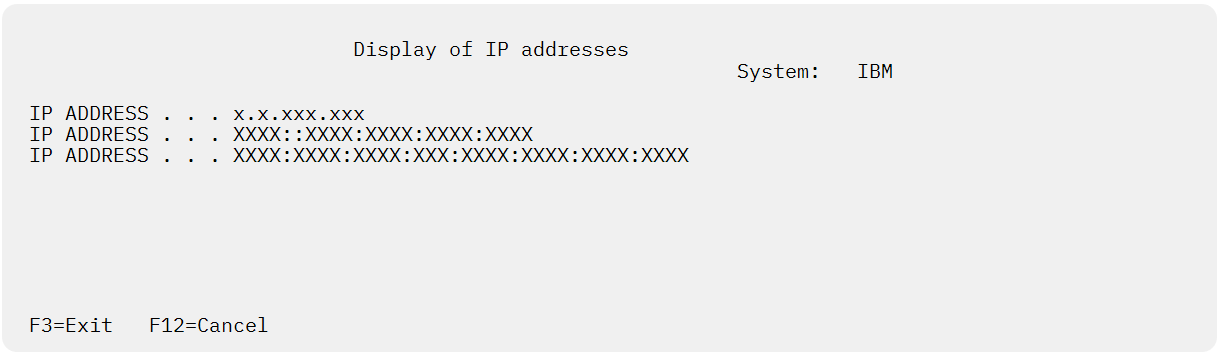

Pressing F5 displays the various IP addresses.

Note: If you do not see a valid IP address, then the service tools server is not configured. If you do see a valid IP address, that alone does not guarantee that the server is configured correctly.

Note: If you use the same port (for example, a gigabit adapter) for the Service Tools Server that is used for TCP/IP, ensure that you end TCP/IP (ENDTCP) and vary off the TCP/IP line description before configuring the Service Tools Server (STS). Only gigabit adapters can be shared.

To simplify the tagging process, F13 allows selecting the resource and eliminates the need to temporarily configure Operations Console on the partition. The following steps describe how to select and configure the Service Tools Server (STS):

1. Start System Service Tools (STRSST).

2. Work with service tools user IDs and Devices (Option 8).

3. Select STS LAN adapter (F13) to see available adapters. If no available adapters are listed and you plan to tag a gigabit adapter, press F21 to show all adapters.

4. Press Enter.

5. Enter the TCP/IP information. Have your network administrator provide you with a valid TCP/IP address when you enter this information.

6. Press F7 (Store).

7. Press F14 (Activate).

Note: The DST LAN console adapter needs a unique IP address. This address cannot be in use anywhere else on your network.

NFS server setup

Setup of the NFS server is platform-specific; see the platform's NFS documentation. The NFS server must be active and have the following configuration:

- The directory (or a parent directory) that contains the virtual optical images must be exported with the following characteristics:

  - Share with the following IP addresses:

    1. IP address of the IBM i client.

    2. IP address of the IBM i client service tools server or the LAN console connection.

  - Share for read and write.

Note: The example in this document assumes that the image files are in a directory named /nfsexport/share01 on the NFS server. The directory can have a different name; if it does, replace every reference to /nfsexport/share01 with the directory that contains the images on the NFS server.

IBM i NFS client setup

Set up the IBM i client to use virtual optical images stored on an NFS server. The command examples that follow assume that NFS server SERVER01, with IP address '1.2.3.4', has exported directory /nfsexport/share01.

Note: IBM i 7.6 NFS clients must issue a STRNFSSVR *BIO command before running a STRNETINS command or mounting over a remote NFS directory to create optical volumes via PASE.

1. Create a mount directory for the NFS server.

MKDIR DIR('/NFS')

MKDIR DIR('/NFS/SERVER01')

2. Mount the directory exported by the NFS server over the IBM i mount directory.

MOUNT TYPE(*NFS) MFS('1.2.3.4:/nfsexport/share01') MNTOVRDIR('/NFS/SERVER01')

3. Create image information on the NFS server in the Portable Application Solutions Environment (PASE):

i. Enter PASE.

CALL PGM(QP2TERM)

ii. Create a directory to contain the virtual image files.

mkdir /NFS/SERVER01/iImages

iii. Change to the virtual image file directory.

cd /NFS/SERVER01/iImages

iv. Create image files. The number of images and the size of the images cannot be changed during a save operation, so they must be created large enough to hold all the save data. This example creates three images of approximately 10 GB each.

Note: Creating large files can take several minutes. Do not exit PASE until all the commands send a message that indicates they completed.

dd if=/dev/zero of=IMAGE01.ISO bs=1M count=10000

dd if=/dev/zero of=IMAGE02.ISO bs=1M count=10000

dd if=/dev/zero of=IMAGE03.ISO bs=1M count=10000

v. Create volume list file VOLUME_LIST. The W at the end of each line indicates that the image allows write access. The -f flag lets the rm command succeed even if no earlier VOLUME_LIST exists.

rm -f VOLUME_LIST

echo 'IMAGE01.ISO W' >> VOLUME_LIST

echo 'IMAGE02.ISO W' >> VOLUME_LIST

echo 'IMAGE03.ISO W' >> VOLUME_LIST

Verify the list.

cat VOLUME_LIST

The cat output shows a line for each of the image file names with the corresponding access intent.

IMAGE01.ISO W

IMAGE02.ISO W

IMAGE03.ISO W

vi. Exit PASE by pressing F3.

Note: This image creation process is separate from the use of the image files by the virtual optical device. The virtual optical device does not access the image files through the mount-over directory used in PASE; it uses the native directory on the NFS server. That directory must be exported to the service port IP address with read/write permissions.
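Because images cannot be added or extended during a save, the image count in step 3 has to be chosen up front. The Python sketch below shows one way to derive the dd commands and VOLUME_LIST lines from an estimated save size. It is illustrative only: the function name `plan_images` is hypothetical, the save size estimate must come from you, and the sketch assumes that `bs=1M` means 1,048,576 bytes. It also ignores any space that volume initialization consumes, so round the estimate up generously.

```python
MIB = 1024 * 1024  # assumption: dd treats bs=1M as 1,048,576 bytes


def plan_images(save_bytes, image_mib=10000, prefix="IMAGE"):
    """Return (dd_commands, volume_list_lines) for enough images to hold save_bytes.

    save_bytes -- estimated size of the save, in bytes (supplied by the caller)
    image_mib  -- size of each image in MiB; 10000 matches the example in step 3
    prefix     -- image file name prefix; names follow the IMAGE01.ISO pattern
    """
    if save_bytes <= 0 or image_mib <= 0:
        raise ValueError("sizes must be positive")
    image_bytes = image_mib * MIB
    # Integer ceiling division: round up to a whole number of images.
    count = -(-save_bytes // image_bytes)
    names = [f"{prefix}{i:02d}.ISO" for i in range(1, count + 1)]
    dd_commands = [
        f"dd if=/dev/zero of={name} bs=1M count={image_mib}" for name in names
    ]
    # Every image is listed with write access, as in the example VOLUME_LIST.
    volume_list_lines = [f"{name} W" for name in names]
    return dd_commands, volume_list_lines
```

The returned strings match the form of the commands in step 3 and are meant to be reviewed and then entered in PASE by hand; the sketch does not run them.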

4. Create a device description for a virtual optical device.

CRTDEVOPT DEVD(NFSDEV01) RSRCNAME(*VRT) LCLINTNETA(*SRVLAN) RMTINTNETA('1.2.3.4') NETIMGDIR('/nfsexport/share01/iImages')

Note: Replace 1.2.3.4 with the IP address of the NFS server. Replace /nfsexport/share01/iImages with the directory on the NFS server that contains the volume images.

5. Vary on the virtual optical device.

VRYCFG CFGOBJ(NFSDEV01) CFGTYPE(*DEV) STATUS(*ON)

Note: If the images or the VOLUME_LIST file change on the NFS server, the virtual optical device must be varied off and then varied back on to use the virtual images.

6. Use the device to initialize the image files as volumes.

LODIMGCLGE IMGCLG(*DEV) IMGCLGIDX(3) DEV(NFSDEV01)

INZOPT NEWVOL(IVOL03) DEV(NFSDEV01) CHECK(*NO)

LODIMGCLGE IMGCLG(*DEV) IMGCLGIDX(2) DEV(NFSDEV01)

INZOPT NEWVOL(IVOL02) DEV(NFSDEV01) CHECK(*NO)

LODIMGCLGE IMGCLG(*DEV) IMGCLGIDX(1) DEV(NFSDEV01)

INZOPT NEWVOL(IVOL01) DEV(NFSDEV01) CHECK(*NO)

7. Virtual device NFSDEV01 can now be used for native IBM i save and restore operations. Volume IVOL01 (image file '/nfsexport/share01/iImages/IMAGE01.ISO') is mounted on device NFSDEV01. To test the device, do the following to verify that a message queue can be saved and restored. Messages from the save and restore commands indicate that one object was saved and one object was restored.

CRTMSGQ MSGQ(QTEMP/MSGQ)

SAVOBJ OBJ(MSGQ) LIB(QTEMP) DEV(NFSDEV01) CLEAR(*ALL)

RSTOBJ OBJ(*ALL) SAVLIB(QTEMP) DEV(NFSDEV01)

The save contents of the virtual optical volume can also be displayed:

DSPOPT VOL(IVOL01) DEV(NFSDEV01) DATA(*SAVRST) PATH(*ALL)
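Step 6 repeats the same pair of commands for each image, working from the highest catalog index down to 1. For a longer volume list, the command text can be generated rather than typed. The following Python sketch is illustrative only: the function name `init_commands` is hypothetical, and it produces only the command strings shown in step 6, which must still be reviewed and run on the IBM i client.

```python
def init_commands(device, volume_names):
    """Return CL command strings that initialize each image as a volume.

    device       -- name of the virtual optical device, for example NFSDEV01
    volume_names -- new volume names in VOLUME_LIST order (index 1 first)

    Commands are emitted from the highest index down to 1, mirroring step 6,
    so the image at index 1 is the last one loaded.
    """
    commands = []
    for index in range(len(volume_names), 0, -1):
        volume = volume_names[index - 1]
        commands.append(f"LODIMGCLGE IMGCLG(*DEV) IMGCLGIDX({index}) DEV({device})")
        commands.append(f"INZOPT NEWVOL({volume}) DEV({device}) CHECK(*NO)")
    return commands
```

Calling `init_commands("NFSDEV01", ["IVOL01", "IVOL02", "IVOL03"])` reproduces the six commands in step 6. Ending on index 1 is consistent with step 7, which states that volume IVOL01 is mounted when the device is first used.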

Additional Information

Document Location

Worldwide

Document Information

Modified date:

30 March 2026

UID

ibm16220172