Upgrade the API Connect component in CP4I from v10.0.1.1-eus or later to 10.0.1.7-eus.

Before you begin

If you are running API Connect v10.0.1.2-eus, your deployment might be experiencing DNS errors in pgbouncer. Review the known issue to determine whether your deployment is affected and, if so, replace the pgbouncer image before upgrading to API Connect 10.0.1.7-eus.

Known issue: Pgbouncer DNS error in v10.0.1.2-eus

Complete the following steps to determine whether your deployment is experiencing DNS errors with pgbouncer, and to replace the pgbouncer image if needed. This issue affects only API Connect v10.0.1.2-eus. If you are running a different version of 10.0.1.x-eus, your deployment is not affected and you can proceed directly to the upgrade steps.

Determine whether your v10.0.1.2-eus deployment is experiencing the problem:

- Get the pgbouncer pod name:

oc get pods -n <APIC_namespace> | grep 'pgbouncer'

where <APIC_namespace> is the namespace where you installed API Connect.

- Check the pgbouncer log for the server DNS lookup failed error message:

oc logs <pgbouncer-pod-name> -n <APIC_namespace> | grep 'server DNS lookup failed'

If the server DNS lookup failed message appears in the log, then your deployment is impacted and you must replace the pgbouncer image. If other errors appear, this procedure will not correct them and you should contact IBM Support for assistance. If no errors appear, you can proceed directly to the upgrade steps.
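The check above can be scripted. The following is a minimal sketch, not part of the official procedure: the function name check_pgbouncer_dns is hypothetical, and the sketch assumes that you are logged in with the oc CLI and that pgbouncer pod names contain the string pgbouncer.

```shell
# Sketch: report whether any pgbouncer pod logs the DNS lookup error.
# check_pgbouncer_dns is a hypothetical helper name, not an IBM tool.
check_pgbouncer_dns() {
  local ns="$1" pod pods
  # The pod name is the first column of the `oc get pods` output.
  pods=$(oc get pods -n "$ns" --no-headers | awk '/pgbouncer/ {print $1}')
  for pod in $pods; do
    if oc logs "$pod" -n "$ns" | grep -q 'server DNS lookup failed'; then
      echo "AFFECTED: $pod"
      return 1
    fi
  done
  echo "OK: no DNS lookup errors found"
}
```

For example, run check_pgbouncer_dns <APIC_namespace>. A non-zero return code means that the message was found and the image should be replaced. The sketch does not look for other errors, so review the log manually as well.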

Replace the pgbouncer image:

- Get the new pgbouncer image format from the registry where version 10.0.1.7-eus images were pushed; for example:

<registry-name>/ibm-apiconnect-management-crunchy-pgbouncer@sha256:4a5caaf4e5cd4056ccb3de7d39b8e343b0c4ebce7cae694ccbfbe80924d98752

For CP4I, the default registry-name is icr.

- Get the pgbouncer deployment name:

oc get deploy -n <APIC_namespace> | grep 'pgbouncer'

- Edit the pgbouncer deployment:

oc edit deploy <pgbouncer-deploy-name> -n <APIC_namespace>

- In the deployment, replace the value in the container image section with the new image that you obtained from the registry.

- Wait for the pgbouncer pod to restart.

- Exec into the pgbouncer pod:

oc exec -it <pgbouncer-pod> -n <APIC_namespace> -- bash

- Execute pgbouncer --version and make sure the response matches the following information:

bash-4.4$ pgbouncer --version
PgBouncer 1.15.0
libevent 2.1.8-stable
adns: evdns2
tls: OpenSSL 1.1.1g FIPS 21 Apr 2020
systemd: yes

- Verify that the server DNS lookup failed message no longer appears in the pgbouncer log:

oc logs <pgbouncer-pod-name> -n <APIC_namespace> | grep 'server DNS lookup failed'

- Delete back-end microservices to force a restart:

  - Get the apim microservices pod name: oc get pods -n <APIC_namespace> | grep 'apim'

  - Delete the apim pod: oc delete pod <apim-pod> -n <APIC_namespace>

  - Get the lur microservices pod name: oc get pods -n <APIC_namespace> | grep 'lur'

  - Delete the lur pod: oc delete pod <lur-pod> -n <APIC_namespace>

  - Get the task manager microservice pod name: oc get pods -n <APIC_namespace> | grep 'task'

  - Delete the task manager pod: oc delete pod <task-manager-pod> -n <APIC_namespace>

- Make sure the deployment is up and running before proceeding to upgrade to 10.0.1.7-eus.
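The apim, lur, and task manager restarts in the procedure above can be combined into one loop. This is a sketch under stated assumptions: restart_backend_pods is a hypothetical helper name, and the patterns apim, lur, and task are assumed to match only the intended pods in your namespace. List the matching pods first if you are unsure.

```shell
# Sketch: delete the apim, lur, and task manager pods so that they restart.
# restart_backend_pods is a hypothetical helper name, not an IBM tool.
restart_backend_pods() {
  local ns="$1" pattern pod
  for pattern in apim lur task; do
    # Match the pattern against the pod name (first column) only.
    for pod in $(oc get pods -n "$ns" --no-headers | awk -v p="$pattern" '$1 ~ p {print $1}'); do
      oc delete pod "$pod" -n "$ns"
    done
  done
}
```

For example, run restart_backend_pods <APIC_namespace>, and then wait for the replacement pods to reach the Running state.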

About this task

If you already deployed the API Management capability for 2020.4.1 using API Connect 10.0.1.1-eus or later, you can upgrade to 10.0.1.7-eus by completing the following steps.

Procedure

- Back up all certificates and issuers to a file by running the following command:

oc get --all-namespaces -oyaml issuer,clusterissuer,certificate,secret > backup.yaml

- Run the pre-upgrade health check:

  - Verify that the apicops utility is installed by checking the current version of the utility.

  - Run the following command to set the KUBECONFIG environment variable:

export KUBECONFIG=/<path>/kubeconfig

  - Run the following command to execute the pre-upgrade script:

apicops version:pre-upgrade -n <namespace>

If the system is healthy, the results will not include any errors.

- If you use the Operations Dashboard with API management, complete the following steps to upgrade the dashboard and the corresponding images used in API management:

  - Upgrade the Operations Dashboard.

  - Update the image paths in the API Connect Cluster CR's spec.gateway.openTracing section as explained in step 2 of Enabling open tracing for API management.

- Upgrade operators by completing the following steps:

Important: Upgrade operators in the specified sequence to ensure that all dependencies are satisfied.

When you update operators, the behavior depends on whether you enabled automatic or manual subscriptions for the operator channel:

- If you enabled Automatic subscriptions, the operator version will automatically upgrade to the required version if needed.

- If you enabled Manual subscriptions, and if the operator channel is already at the required version, then the OpenShift UI (OLM) will notify you that an upgrade is available. You must manually approve the upgrade.

- Upgrade the Platform Navigator.

- Update the DataPower operator channel to 1.2-eus, and then wait for the operator to update, for the pods to restart, and for a Ready status.

Note: There is a known issue on OpenShift 4.6.7 or higher that can cause the DataPower operator v1.2.0 to fail to start. If the operator fails to start, uninstall the DataPower operator and update to DataPower operator v1.2.1 or later. Version 1.2.1 (or later) of the operator fixes the issue and starts correctly.

In the next 2 steps, the IBM Cloud Pak foundational services operator and the API Connect operator must be upgraded in tandem.

- Update the IBM Cloud Pak foundational services channel to v3, and update the operator to 3.19.

- Immediately update the API Connect operator channel to 2.1.7-eus, and then wait for the operator to update, for the pods to restart, and for a Ready status.

-

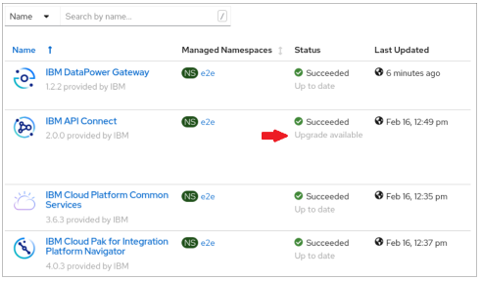

Verify that the IBM API Connect capability displays

Succeeded (green check

mark) in Platform Navigator.

Do not proceed until you see the Succeeded status.

Troubleshooting: If you encounter the following error condition during the upgrade from an API Connect version earlier than 10.0.1.6-eus, complete the workaround steps to patch the pgcluster CR, and then upgrade the API Connect operator again.

- Check for the following errors:

From the API Connect operator:

upgrade cluster failed: Could not upgrade cluster: there exists an ongoing upgrade task: [minimum-mgmt-56616911-postgres-upgrade]. If you believe this is an error, try deleting this pgtask

{"level":"info","ts":1659638932.470165,"logger":"UpgradeCluster: ","msg":"Postgres DB version is less than pgoVersion. Performing upgrade ","pgoVersion: ":"4.7.4","postgresDBVersion: ":"4.5.2","clusterName":"minimum-mgmt-56616911-postgres"}

And from the postgres operator:

time="2022-08-04T22:05:42Z" level=error msg="Namespace Controller: error syncing Namespace 'cp4i': unsuccessful pgcluster version check: Pgcluster.crunchydata.com "minimum-mgmt-56616911-postgres" is invalid: spec.backrestStorageTypes: Invalid value: "null": spec.backrestStorageTypes in body must be of type array: "null""

The errors are caused by a null value for the backrestStorageTypes property in the pgcluster CR. The workaround is to patch the pgcluster CR and correct the property. If backrestStorageTypes: is present under spec with a null value, replace the null with the appropriate value shown below. If backrestStorageTypes does not appear in the pgcluster CR, add it with the value that matches the backup type that you use.

- Get the pgcluster name and edit the CR:

oc get pgcluster -n <APIC_namespace>

oc edit pgcluster <pgcluster_name> -n <APIC_namespace>

- Add a value for the backrestStorageTypes in the spec: section:

Example for S3 backups:

backrestStorageTypes:
- s3

Example for local and SFTP backups:

backrestStorageTypes:
- posix

- Save and apply the CR with the wq command.
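As an alternative to editing the CR interactively, the same change can be sketched as a JSON merge patch. This is not part of the documented workaround: backrest_patch and patch_pgcluster are hypothetical helper names, and the sketch assumes that a merge patch through oc patch has the same effect on the pgcluster CR as the manual edit above.

```shell
# Sketch: build a JSON merge patch that sets spec.backrestStorageTypes.
# backrest_patch and patch_pgcluster are hypothetical helper names.
backrest_patch() {
  case "$1" in
    # s3 for S3 backups, posix for local and SFTP backups.
    s3|posix) printf '{"spec":{"backrestStorageTypes":["%s"]}}' "$1" ;;
    *) echo "unsupported storage type: $1" >&2; return 1 ;;
  esac
}

# Apply the patch to the named pgcluster CR in the given namespace.
patch_pgcluster() {
  local name="$1" ns="$2" stype="$3" patch
  patch=$(backrest_patch "$stype") || return 1
  oc patch pgcluster "$name" -n "$ns" --type merge -p "$patch"
}
```

For example, patch_pgcluster <pgcluster_name> <APIC_namespace> posix would apply the local and SFTP value. Running backrest_patch on its own prints the patch so that you can review it before applying it.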

If you are upgrading from an API Connect release prior to 10.0.1.7-eus, complete steps 5, 6, and 7 to resolve certificate issues (certificate manager was upgraded in API Connect 10.0.1.7-eus). Otherwise, skip to step 8.

- Check for certificate errors.

In Version 10.0.1.7-eus, API Connect upgraded its certificate manager, which might cause some errors during the upgrade. Complete the following steps to check for certificate errors and correct them.

- Check the new API Connect operator's log for an error similar to the following example:

{"level":"error","ts":1634966113.8442025,"logger":"controllers.AnalyticsCluster","msg":"Failed to set owner reference on certificate request","analyticscluster":"apic/instance-name-a7s","certificate":"instance-name-a7s-ca","error":"Object apic/instance-name-a7s-ca is already owned by another Certificate controller instance-name-a7s-ca",

To correct this problem, delete all issuers and certificates generated with certmanager.k8s.io/v1alpha1. For certificates used by route objects, you must also delete the route and secret objects.

- Run the following commands to delete the issuers and certificates that were generated with certmanager.k8s.io/v1alpha1:

oc delete issuers.certmanager.k8s.io instance-name-self-signed instance-name-ingress-issuer instance-name-mgmt-ca instance-name-a7s-ca instance-name-ptl-ca

oc delete certs.certmanager.k8s.io instance-name-ingress-ca instance-name-mgmt-ca instance-name-ptl-ca instance-name-a7s-ca

In the examples, instance-name is the instance name of the top-level apiconnectcluster.

When you delete the issuers and certificates, the new certificate manager generates replacements; this might take a few minutes.

- Verify that the new CA certs are refreshed and ready.

Run the following command to verify the certificates:

oc get certs instance-name-ingress-ca instance-name-mgmt-ca instance-name-ptl-ca instance-name-a7s-ca

The CA certs are ready when the AGE column shows that they were newly created and the READY column shows True.
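The READY check can be automated by parsing the command output. The following is a minimal sketch: certs_all_ready is a hypothetical helper name, and the sketch assumes that the output has a header row containing a READY column, as the text above describes. It does not check the AGE column.

```shell
# Sketch: succeed only if every certificate row shows READY=True.
# Reads `oc get certs ...` output on stdin.
# certs_all_ready is a hypothetical helper name, not an IBM tool.
certs_all_ready() {
  awk '
    NR == 1 {
      # Locate the READY column by its header instead of assuming a position.
      for (i = 1; i <= NF; i++) if ($i == "READY") col = i
      next
    }
    { rows++; if (col == 0 || $col != "True") bad++ }
    END { if (rows > 0 && bad == 0) exit 0; exit 1 }
  '
}
```

For example: oc get certs instance-name-ingress-ca instance-name-mgmt-ca instance-name-ptl-ca instance-name-a7s-ca | certs_all_ready && echo "CA certs ready". The function fails if no certificate rows are returned.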

- Delete the remaining old certificates, routes, and secret objects.

Run the following commands:

oc get certs.certmanager.k8s.io | awk '/instance-name/{print $1}' | xargs oc delete certs.certmanager.k8s.io

oc delete certs.certmanager.k8s.io postgres-operator

oc get routes --no-headers -o custom-columns=":metadata.name" | grep ^instance-name- | xargs oc delete secrets

oc get routes --no-headers -o custom-columns=":metadata.name" | grep ^instance-name- | xargs oc delete routes

- Verify that no old issuers or certificates from your top-level instance remain.

Run the following commands:

oc get issuers.certmanager.k8s.io | grep instance-name

oc get certs.certmanager.k8s.io | grep instance-name

Both commands should report that no resources were found.

- Use the apicops utility (version 0.10.57 or later) to detect stale certificates and then delete them.

In rare cases, when new certificates are generated, some of them might be signed with the old CA secret. The result is that the new certificates can't be verified. If you are upgrading from 10.0.1.6-ifix1-eus or older, the top-level API Connect CR might not show a ready/health state until you delete all the secrets that failed to be verified. Using the apicops tool ensures that you successfully detect all stale certificates.

- Verify that the OpenShift UI indicates that all operators are in the Succeeded state without any warnings.

- Run the following command:

apicops upgrade:stale-certs -n <APIC_namespace>

- Delete any stale secrets for certificates that are managed by cert-manager.

If a certificate failed the verification and it is managed by cert-manager, you can delete the stale certificate secret, and let cert-manager regenerate it. To delete the certificate secret, run the following command:

oc delete secret <stale-secret> -n <APIC_namespace>

Note that you are deleting the secret and not the new certificate.

- If needed, delete the portal-www, portal-db and portal-nginx pods to ensure they use the new secrets.

If you have the Developer Portal deployed, then the portal-www, portal-db and portal-nginx pods might need to be deleted so that they pick up the newly generated secrets when they restart. If the pods are not showing as "ready" in a timely manner, then delete all the pods at the same time (this will cause down time).

Run the following commands to get the name of the portal CR and delete the pods:

oc project <APIC_namespace>

oc get ptl

oc delete po -l app.kubernetes.io/instance=<name_of_portal_CR>

- If needed, renew the internal certificates for the analytics subsystem.

If you see analytics-storage-* or analytics-mq-* pods in the CrashLoopBackOff state, then renew the internal certificates for the analytics subsystem and force a restart of the pods.

- Switch to the project/namespace where analytics is deployed and run the following command to get the name of the analytics CR (AnalyticsCluster):

oc project <APIC_namespace>

oc get a7s

You need the CR name for the remaining steps.

- Renew the internal certificates (CA, client, and server) by running the following commands:

oc get certificate <name_of_analytics_CR>-ca -o=jsonpath='{.spec.secretName}' | xargs oc delete secret

oc get certificate <name_of_analytics_CR>-client -o=jsonpath='{.spec.secretName}' | xargs oc delete secret

oc get certificate <name_of_analytics_CR>-server -o=jsonpath='{.spec.secretName}' | xargs oc delete secret

- Force a restart of all analytics pods by running the following command:

oc delete po -l app.kubernetes.io/instance=<name_of_analytics_CR>
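The three renewal commands and the pod restart follow the same pattern, so they can be sketched as one loop. renew_analytics_certs is a hypothetical helper name, and the sketch assumes that you already switched to the analytics project with oc project, as in the first step above.

```shell
# Sketch: delete the secrets behind the analytics CA, client, and server
# certificates, then force a restart of the analytics pods.
# renew_analytics_certs is a hypothetical helper name, not an IBM tool.
renew_analytics_certs() {
  local cr="$1" suffix secret
  for suffix in ca client server; do
    # Look up the secret name that the certificate refers to.
    secret=$(oc get certificate "${cr}-${suffix}" -o=jsonpath='{.spec.secretName}')
    # Skip the delete if the lookup returned nothing.
    if [ -n "$secret" ]; then
      oc delete secret "$secret"
    fi
  done
  oc delete po -l "app.kubernetes.io/instance=${cr}"
}
```

For example, run renew_analytics_certs <name_of_analytics_CR>, using the CR name returned by oc get a7s.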

- Update the DataPower Gateway and API Connect operands:

  - In Platform Navigator, click the Runtimes tab.

  - Click the icon at the end of the current row, and then click Change version.

  - Click Select a new channel or version, and then select 10.0.1.7-eus in the Channel field.

    Selecting the new channel ensures that both DataPower Gateway and API Connect are upgraded.

  - Click Save to save your selections and start the upgrade.

    In the runtimes table, the Status column for the runtime displays the "Upgrading" message. The upgrade is complete when the Status is "Ready" and the Version displays the new version number.

- Upgrade the OpenShift cluster to 4.10.

  - Change the channel in OpenShift to 4.10, and wait for the upgrade to finish.

  - Wait for all nodes to show the newer version of Kubernetes.
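The node version check can be sketched as a small filter over the oc get nodes output. nodes_on_version is a hypothetical helper name, and the sketch assumes that the output has a header row with a VERSION column. OpenShift 4.10 is based on Kubernetes 1.23, so v1.23 is the expected prefix; confirm this against your own cluster.

```shell
# Sketch: succeed only if every node reports a version with the given prefix.
# Reads `oc get nodes` output on stdin.
# nodes_on_version is a hypothetical helper name, not an IBM tool.
nodes_on_version() {
  awk -v want="$1" '
    NR == 1 {
      # Locate the VERSION column by its header.
      for (i = 1; i <= NF; i++) if ($i == "VERSION") col = i
      next
    }
    { rows++; if (col == 0 || index($col, want) != 1) bad++ }
    END { if (rows > 0 && bad == 0) exit 0; exit 1 }
  '
}
```

For example: oc get nodes | nodes_on_version v1.23 && echo "all nodes upgraded".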