Refining data (Data Refinery)

Use Data Refinery to cleanse and shape tabular data with a graphical flow editor. You can also use interactive templates to code operations, functions, and logical operators. When you cleanse data, you fix or remove data that is incorrect, incomplete, improperly formatted, or duplicated. When you shape data, you customize it by filtering, sorting, combining or removing columns, and performing operations.

Service: The Data Refinery service is not available by default. An administrator must install either the Watson Studio service or the Watson Knowledge Catalog service on the IBM Cloud Pak for Data platform. To determine whether the service is installed, open the Services catalog and check whether the Data Refinery service is enabled.

You create a Data Refinery flow as a set of ordered operations on data. Data Refinery includes a graphical interface for profiling and validating your data, and more than 20 customizable charts that give you perspective on and insight into your data. When you save the refined data set, you typically load it to a different location than the one you read it from. In this way, your source data remains untouched by the refinement process.

- Required service

  - Watson Studio or Watson Knowledge Catalog

- Data format

  - Avro, CSV, JSON, Parquet, TSV (read only), or delimited text data asset

  - Tables in relational data sources

- Data size

  - Any. Data Refinery operates on a sample subset of rows in the data set. The sample size is 1 MB or 10,000 rows, whichever comes first. However, when you run a job for the Data Refinery flow, the entire data set is processed.

- Prerequisites

- Source file limitations

- Target file limitations

- Refine your data

- Data set previews

- Data Refinery steps

Prerequisites

Before you can refine data, you need a project.

If you have data in cloud or on-premises data sources, you’ll need connections to those sources and you’ll need to add data assets from each connection. If you want to be able to save refined data to cloud or on-premises data sources, create connections for this purpose as well. Source connections can be used only to read data; target connections can be used only to load (save) data. When you create a target connection, be sure to use credentials that have Write permission or you won’t be able to save your Data Refinery flow output to the target.

Source file limitations

CSV files

Be sure that CSV files are correctly formatted and conform to the following rules:

- Files can’t have some rows ending with null values and some columns containing values enclosed in double quotation marks.

- Two consecutive commas in a row indicate an empty column.

- If a row ends with a comma, an additional column is created.
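
The last two rules match how a standard CSV parser reads a row. As a minimal sketch (it uses Python's `csv` module for illustration, not Data Refinery itself, and the helper names and sample data are hypothetical), you could check a file for empty and extra columns before you refine it:

```python
import csv
import io


def parse_rows(text):
    """Parse CSV text into a list of rows; each row is a list of strings."""
    return list(csv.reader(io.StringIO(text)))


def ragged_rows(rows):
    """Return the indexes of rows whose field count differs from the header row."""
    width = len(rows[0])
    return [i for i, row in enumerate(rows) if len(row) != width]


# Hypothetical sample: row 1 has two consecutive commas,
# row 2 ends with a comma.
sample = "id,name,city\n1,,Paris\n2,Ana,Oslo,\n"
rows = parse_rows(sample)

print(rows[1])            # ['1', '', 'Paris'] : the empty column is kept
print(rows[2])            # ['2', 'Ana', 'Oslo', ''] : an extra column appears
print(ragged_rows(rows))  # [2]
```

A row that is reported by `ragged_rows` usually means a stray trailing comma in the source file.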

White space characters are considered as part of the data

If your data includes columns that contain white space (blank) characters, Data Refinery considers those white space characters as part of the data, even though you can’t see them in the grid. Be aware that some database tools might pad character strings with white space characters to make all the data in a column the same length and this change will affect the results of Data Refinery operations that compare data.
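
The effect of padding on comparisons can be illustrated with plain string handling. This is only a sketch in Python with hypothetical values and helper names; it does not show how Data Refinery compares data internally:

```python
def has_outer_whitespace(value):
    """True if the string starts or ends with white space characters."""
    return value != value.strip()


def find_padded(values):
    """Return the values that carry leading or trailing white space."""
    return [v for v in values if has_outer_whitespace(v)]


padded = "NY   "  # hypothetical value padded to a fixed width of 5
plain = "NY"

print(padded == plain)                 # False: the padding is part of the data
print(padded.strip() == plain)         # True once the padding is removed
print(find_padded(["NY   ", "CA", " TX"]))
```

Trimming such values before a comparison or a join avoids mismatches that are invisible in the grid.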

Column names

Be sure that column names conform to the following rules:

- Duplicate column names are not allowed. Column names must be unique within the data set. Column names are not case-sensitive, so a data set that includes a column named “Sales” and another column named “sales” will not work.

- The column names are not reserved words for R.

- The column names are not numbers. A workaround is to enclose the column names in double quotation marks (“”).
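
The column name rules above can be checked before you load a data set. The following is a hedged sketch in Python: the function name and messages are hypothetical, the reserved-word set is taken from R's documented reserved words (excluding the `...` and `..1` forms), and the check is not Data Refinery's own validation logic.

```python
import re

# Reserved words as listed in R's "Reserved" help topic.
R_RESERVED = {
    "if", "else", "repeat", "while", "function", "for", "in", "next", "break",
    "TRUE", "FALSE", "NULL", "Inf", "NaN", "NA",
    "NA_integer_", "NA_real_", "NA_complex_", "NA_character_",
}

# Assumption: "a number" means a plain decimal literal such as 2021 or 3.5.
_NUMBER = re.compile(r"[+-]?(\d+(\.\d*)?|\.\d+)([eE][+-]?\d+)?")


def check_column_names(names):
    """Return a list of human-readable problems found in the column names."""
    problems = []
    seen = {}  # case-folded name -> first spelling encountered
    for name in names:
        key = name.casefold()
        if key in seen:
            problems.append(
                f"duplicate (ignoring case): {seen[key]!r} and {name!r}"
            )
        else:
            seen[key] = name
        if name in R_RESERVED:
            problems.append(f"R reserved word: {name!r}")
        if _NUMBER.fullmatch(name):
            problems.append(f"numeric name: {name!r}")
    return problems


print(check_column_names(["Sales", "sales", "2021", "function"]))
```

An empty list means that none of the three rules was violated.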

Data sets with columns with the “Other” data type are not supported in Data Refinery flows

If your data set contains columns that have data types that are identified as “Other” in the Watson Studio preview, the columns will show as the String data type in Data Refinery. However, if you try to use the data in a Data Refinery flow, the job for the Data Refinery flow will fail. An example of a data type that shows as “Other” in the preview is the Db2 DECFLOAT data type.

Target file limitations

The following limitations apply if you save Data Refinery flow output (the target data set) to a file:

- You can’t preview the file from the Data Refinery flow details page.

- You can’t change the file format if the file is an existing data asset.

Refine your data

- Access Data Refinery from within a project. Click Add to project, and then choose Data Refinery flow. Then select the data you want to work with.

Alternatively, from the Assets tab of a project page, you can perform one of the following actions:

- Select Refine from the menu of an Avro, CSV, JSON, Parquet, TSV, or delimited text data asset

- Click an Avro, CSV, JSON, Parquet, TSV, or delimited text data asset to preview it first and then click the Refine link

- If you already have a Data Refinery flow, click New Data Refinery flow from the Data Refinery flows section and then select the data that you want to work with.

Tip: If your data doesn’t display in tabular form, go to the Data tab. Scroll down to the SOURCE FILE information at the bottom of the page. Click the “Specify data format” icon. For more information, see Specify the format of your data source.

-

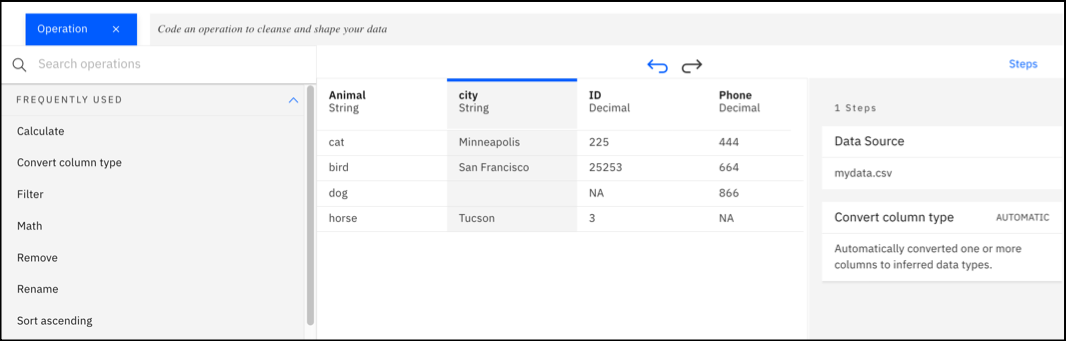

On the Data tab, apply operations to cleanse, shape, and enrich your data. You can enter R code in the command line and let autocomplete assist you in getting the correct syntax. Or you can use the Operations menu to browse operation categories or search for a specific operation, then let the UI guide you.

If your data contains non-string data types, the Convert column type GUI operation is automatically applied as the first step in the Data Refinery flow when you open a file in Data Refinery. Data types are automatically converted to inferred data types, such as Integer, Date, or Boolean. You can undo or edit this step.
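
The idea of inferring a column type from its values can be sketched as follows. This is a purely hypothetical illustration in Python: the rules, their order, and the function names are assumptions, and Data Refinery's actual inference logic is not described in this topic.

```python
import re
from datetime import date

_INTEGER = re.compile(r"[+-]?\d+")


def _is_iso_date(value):
    """True if the value parses as an ISO date such as 2024-01-31."""
    try:
        date.fromisoformat(value)
        return True
    except ValueError:
        return False


def infer_type(values):
    """Guess a type name for a column of strings; empty strings are ignored."""
    non_empty = [v for v in values if v != ""]
    if not non_empty:
        return "String"
    if all(_INTEGER.fullmatch(v) for v in non_empty):
        return "Integer"
    if all(v.lower() in ("true", "false") for v in non_empty):
        return "Boolean"
    if all(_is_iso_date(v) for v in non_empty):
        return "Date"
    return "String"  # fall back when the values are mixed


print(infer_type(["1", "2", "-3"]))
print(infer_type(["2024-01-31", "2023-12-01"]))
print(infer_type(["1", "x"]))
```

A single value that does not fit makes the whole column fall back to String, which is one reason to review the automatic conversion step and edit or undo it when needed.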

-

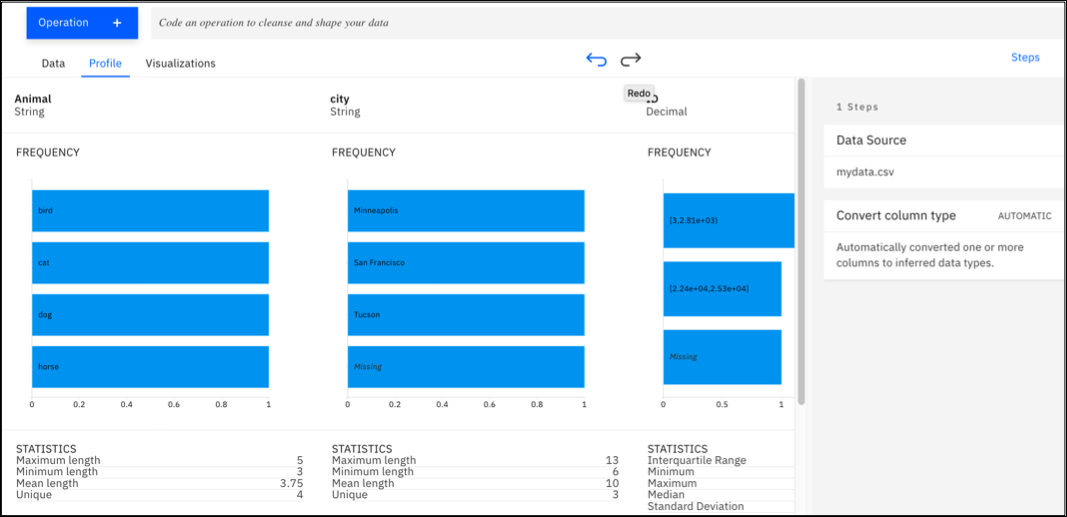

Click the Profile tab to validate your data throughout the data refinement process.

- Click the Visualizations tab to visualize the data in charts. Uncover patterns, trends, and correlations within your data.

- Refine the sample data set to suit your needs.

- Optional: In the Information pane Details tab, click the Edit button to change the Data Refinery flow details and output file information and location.

In the DATA REFINERY FLOW DETAILS pane, click the Edit icon to edit the Data Refinery flow name and description. By default, Data Refinery uses the name of the data source to name the Data Refinery flow and the target data set. You can change these names, but you can’t change the project that these data assets belong to.

In the DATA REFINERY FLOW OUTPUT pane, click Edit output to edit the target data set’s name, description, or location. Select whether the first line of the output file contains the column headers. You can save the target data set to the project, to a connection, or to a connected data asset. If you save it to the project, you can save it as a new data asset (default) or you can replace an existing data asset. Edit the location to save the target data set to a connection or to replace an existing data asset or an existing connected data asset. Alternatively, if the location is set to Data assets, you can edit the name in the Data set name field to specify a data asset as the target. The target data set must be a different data set than the source data set.

If you select an existing relational database table or view or you select a connected relational data asset as the target for your Data Refinery flow output, select an option for the existing data set:

- Overwrite - Overwrite the rows in the existing data set with those in the Data Refinery flow output

- Recreate - Delete the rows in the existing data set and replace them with the rows in the Data Refinery flow output

- Insert - Append all rows of the Data Refinery flow output to the existing data set

- Update - Update rows in the existing data set with the Data Refinery flow output; don’t insert any new rows

- Upsert - Update rows in the existing data set and append the rest of the Data Refinery flow output to it

For the Update and Upsert options, you’ll need to select the columns in the output data set to compare to columns in the existing data set. The output and target data sets must have the same number of columns, and the columns must have the same names and data types in both data sets.
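
How the Insert, Update, and Upsert options differ can be illustrated on in-memory rows. This is a sketch under stated assumptions: rows are Python dictionaries, `keys` stands in for the comparison columns that you select, and the function names are hypothetical. It does not show how Data Refinery or the target database implements these options.

```python
def key_of(row, keys):
    """Build a comparison key from the selected columns of a row."""
    return tuple(row[k] for k in keys)


def insert_rows(existing, output):
    """Insert: append every output row to the existing rows."""
    return existing + output


def update_rows(existing, output, keys):
    """Update: replace matching existing rows; never add new rows."""
    by_key = {key_of(r, keys): r for r in output}
    return [by_key.get(key_of(r, keys), r) for r in existing]


def upsert_rows(existing, output, keys):
    """Upsert: update matching rows, then append the unmatched output rows."""
    existing_keys = {key_of(r, keys) for r in existing}
    updated = update_rows(existing, output, keys)
    new_rows = [r for r in output if key_of(r, keys) not in existing_keys]
    return updated + new_rows


existing = [{"id": 1, "qty": 5}, {"id": 2, "qty": 7}]
output = [{"id": 2, "qty": 9}, {"id": 3, "qty": 1}]

print(insert_rows(existing, output))          # 4 rows; id 2 appears twice
print(update_rows(existing, output, ["id"]))  # id 2 updated, id 3 ignored
print(upsert_rows(existing, output, ["id"]))  # id 2 updated, id 3 appended
```

In this sketch both data sets share the same column names, which mirrors the requirement that the output and target data sets have matching columns.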

If you select a file in a connection as the target for your Data Refinery flow output, you can select one of the following formats for that file:

- Avro

- CSV

- JSON

- Parquet

- Click Save and create a job or Save and view jobs in the toolbar to run the Data Refinery flow on the entire data set. Select the runtime and add a one-time or repeating schedule. For information about jobs, see Jobs in a project.

Tip: If you want to continue refining your data later, open the Data Refinery flow from the project’s Assets tab > Data Refinery flows section and pick up from where you left off.

Data set previews

Data Refinery provides support for large data sets, which can be time-consuming and unwieldy to refine. To enable you to work quickly and efficiently, it operates on a subset of rows in the data set while you interactively refine the data. When you run a job for the Data Refinery flow, it operates on the entire data set.
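
The sample limit stated earlier in this topic (1 MB or 10,000 rows, whichever comes first) can be sketched as a simple cutoff over the rows of a text file. This is an illustration only: the function name is hypothetical, 1 MB is assumed to mean 1,000,000 bytes of UTF-8 text, and the way Data Refinery actually measures the sample is not described here.

```python
MAX_ROWS = 10_000
MAX_BYTES = 1_000_000  # assumption: 1 MB counted as 10**6 bytes


def take_sample(lines, max_rows=MAX_ROWS, max_bytes=MAX_BYTES):
    """Collect lines until either the row limit or the byte limit is reached."""
    sample = []
    used = 0
    for line in lines:
        size = len(line.encode("utf-8"))
        if len(sample) >= max_rows or used + size > max_bytes:
            break
        sample.append(line)
        used += size
    return sample


# Short rows hit the row limit first; long rows hit the size limit first.
short_rows = ["x\n"] * 20_000
long_rows = ["a" * 999 + "\n"] * 5_000

print(len(take_sample(short_rows)))  # 10000
print(len(take_sample(long_rows)))   # 1000
```

Because operations are previewed on such a subset, a value that appears only beyond the sample is first seen when the job runs on the entire data set.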

Data Refinery steps

As you apply operations to a data set, Data Refinery keeps track of them and builds a Data Refinery flow. For each operation that you apply, Data Refinery adds a step in the Steps tab.

To see what your data looked like at any point in time, click a previous step to put Data Refinery into snapshot view. For example, if you click the data source step, you’ll see what your data looked like before you started refining it. Click any operation step to see what your data looked like after that operation was applied. To leave snapshot view, click SNAPSHOT VIEW or toggle it off by clicking the same step that you selected to enter snapshot view.

If you want to change the source of the Data Refinery flow, click the Edit icon next to Data Source (before the first step). For best results, the new data set should have a schema that is compatible with the original data set (for example, column names, number of columns, and data types). If the new data set has a different schema, operations that won’t work with the schema will show errors. You can edit or delete the operations, or change the source to one that has a more compatible schema.

You can undo and redo operations from the toolbar. You can also insert, edit, and delete operations from the Steps tab.

To insert an operation between two steps:

- Click the step before the position where you want to insert the new operation. Data Refinery shows you a snapshot view of the data set after that operation was applied.

- Select and apply the new operation. Data Refinery inserts a new step between the existing steps, and it reruns all of the operations that follow the new step.

To edit an operation:

- Click the Edit icon on the step for the operation you want to edit. Data Refinery goes into edit mode and displays the operation to be edited either on the command line or in the Operation pane.

- Edit the operation or select a different operation to take its place.

- Apply the edited operation. Data Refinery updates the relevant step to reflect your changes and it reruns all of the operations that follow the edited one.
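
The behavior of steps, snapshot view, and inserted operations can be modeled as an ordered list of functions that is replayed from the source data. This is a conceptual sketch in Python with hypothetical operation names and sample rows; it is not how Data Refinery stores or runs a flow.

```python
def run_flow(rows, steps, upto=None):
    """Apply the first `upto` steps (all steps if None) to a copy of the rows."""
    data = list(rows)
    for step in steps[:upto]:
        data = step(data)
    return data


def strip_names(rows):
    """Hypothetical operation: trim white space from the name column."""
    return [{**r, "name": r["name"].strip()} for r in rows]


def drop_zero_qty(rows):
    """Hypothetical operation: keep only rows with a positive quantity."""
    return [r for r in rows if r["qty"] > 0]


def upper_names(rows):
    """Hypothetical operation: convert the name column to uppercase."""
    return [{**r, "name": r["name"].upper()} for r in rows]


source = [{"name": " ana ", "qty": 3}, {"name": "bo", "qty": 0}]
flow = [strip_names, drop_zero_qty]

before = run_flow(source, flow, upto=0)       # snapshot at the data source step
after_first = run_flow(source, flow, upto=1)  # snapshot after the first operation

# Insert a new operation after the first step; the later steps are replayed.
flow.insert(1, upper_names)
result = run_flow(source, flow)
print(result)  # [{'name': 'ANA', 'qty': 3}]
```

Each run starts from the unchanged source rows, which is why a snapshot of any earlier step can be reproduced and why every step after an inserted or edited operation has to be rerun.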