IBM Systems Research: AI and Hybrid Cloud Can Advance Only as Far as Hardware Can Take Them

Systems research advances the foundational technology needed to support the AI and hybrid cloud technologies driving IBM’s strategic business goals.

IBM has a long and storied history as a pioneer of innovative hardware systems, including mainframes, PCs, storage devices and, now, quantum computers. Throughout its 75-year history, IBM Research has played a crucial role in advancing the company’s Systems technology, inventing breakthrough hardware components including Dynamic Random-Access Memory (DRAM), copper semiconductor interconnects and the hard disk drive, to name a few. That work continues, as Systems research advances the foundational technology needed to support the artificial intelligence (AI) and hybrid cloud technologies driving IBM’s strategic business goals.

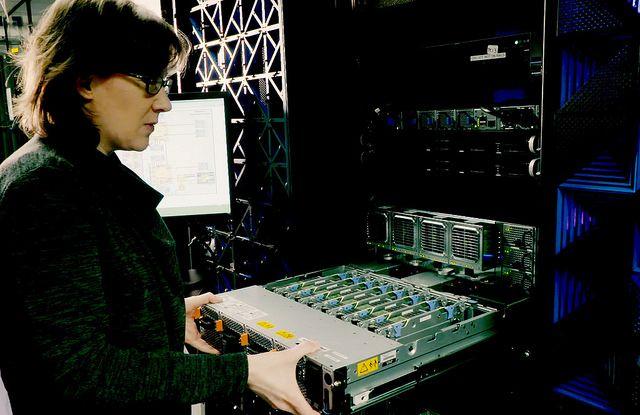

One of IBM Research’s top priorities is developing heterogeneous systems that make advanced classical, AI and quantum capabilities available through the hybrid cloud. Improving hybrid cloud performance, development speed and security at the same time requires innovation across the entire stack. To that end, we are improving hybrid cloud infrastructure through innovations in devices, design and architecture that boost performance and enhance AI capabilities.

Systems research will create entirely new computing capabilities for future generations of IBM Systems AI hardware and composable, purpose-built systems to support the enterprise workloads of tomorrow.

IBM Research is building systems optimized for AI that help accelerate developments on the software and applications side. AI that performs increasingly complex tasks in computer vision, natural language processing (NLP) and speech transcription and synthesis has improved by leaps and bounds in recent years. The machine-learning algorithms and neural networks that enable those complex tasks require continuous advances in software, algorithms and hardware — in particular, highly parallelized hardware equipped to handle the matrix multiplication operations of deep learning.
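The last point is easier to see in code. The following is a minimal Python sketch of one fully connected neural-network layer, written with plain lists. The function names are illustrative and do not come from any IBM toolkit. It shows that the forward pass of a layer is a matrix-vector product in which each output is an independent dot product. Hardware that can run many multiply-accumulate (MAC) operations in parallel benefits from exactly that structure.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def dense_layer(weights: Matrix, bias: Vector, x: Vector) -> Vector:
    """Forward pass of one fully connected layer: y = W x + b.

    Each output y[i] depends only on row i of W and on x, so all rows
    could be computed at the same time on parallel hardware.
    """
    return [sum(w * xj for w, xj in zip(row, x)) + b for row, b in zip(weights, bias)]


def dense_layer_macs(n_in: int, n_out: int, batch: int = 1) -> int:
    """Number of multiply-accumulate operations for one layer over a batch."""
    return n_in * n_out * batch


if __name__ == "__main__":
    W = [[1.0, 2.0], [3.0, 4.0]]
    b = [0.5, -1.0]
    print(dense_layer(W, b, [1.0, 1.0]))  # [3.5, 6.0]
    # A single 1024 x 1024 layer applied to a batch of 64 inputs:
    print(dense_layer_macs(1024, 1024, batch=64))  # 67108864
```

In practice, deep learning frameworks process inputs in batches. This turns the work into a matrix-matrix multiplication, which exposes even more parallelism.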

Our goal is to deliver 2.5x improvements in power and performance for AI workloads each year over the next decade. It has become clear, however, that such advances are quickly stretching the limits of the current generation of computer hardware. Sustaining this pace of AI progress requires breakthroughs in performance and efficiency. These breakthroughs must support complex, real-world applications that combine multiple AI models and operations, going beyond existing AI designed for a single task such as image classification.
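The following worked arithmetic assumes that each year's 2.5x gain compounds on the previous year's. That reading is an interpretation for illustration, not a figure IBM states. Under it, the cumulative target over a decade is:

```latex
\text{cumulative gain after } n \text{ years} = 2.5^{n},
\qquad
2.5^{5} \approx 97.7,
\qquad
2.5^{10} = \left(2.5^{5}\right)^{2} \approx 9.5 \times 10^{3}.
```

Under this reading the target is roughly four orders of magnitude over ten years, which helps explain why it quickly stretches the limits of current hardware.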

Such versatile, multipurpose AI places large demands on compute that cannot be met without innovation. That reality motivated the creation of the IBM Research AI Hardware Center. The Center plays a key role in developing AI hardware that can be used to learn and reason, apply cause-and-effect understanding of data, and integrate information from multiple formats and domains. Launched in 2019, the Center serves as the nucleus of a new ecosystem of research and commercial partners who collaborate with IBM researchers to further accelerate AI-optimized hardware.

AI Systems research examples:

- Analog AI cores: IBM Research has advanced the state of the art in phase-change memory (PCM) devices that can be used to perform computations faster and at a lower cost. Because it is analog, PCM is less precise than digital technology. It is, however, far more energy efficient, and that trade-off is critical to improving AI. In a May 2020 Nature Communications study, for example, IBM Research – Europe researchers described a technique that uses PCM to achieve both energy efficiency and high accuracy on machine learning workloads.[1] Their approach uses in-memory computing with resistance-based storage devices, so the same units store the data and compute on it. This significantly cuts active power consumption.

- Digital AI cores: With our Digital AI Cores, IBM Research is also a global leader in approximate computing architectures and software techniques for deep learning. At the June 2020 VLSI conference, we are presenting research on a new 14-nanometer (nm) processor core for both AI training and inference. The work highlights innovations such as heterogeneous compute engines with architectural programmability, along with dataflow mappings configured to support a broad set of AI workloads. Combined with a software-controlled network interface, these features raised hardware utilization. The higher utilization in turn produced notable gains in training area efficiency and training compute efficiency.
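Both approaches give up some numerical exactness in exchange for efficiency. The following Python sketch is a toy model for illustration only. It is not the method of the cited study and does not model IBM's cores. It computes the same matrix-vector product in three ways: exactly, on a hypothetical analog crossbar, and with uniform reduced-precision integers.

The crossbar model makes several assumptions:

- Each weight is stored as the difference between two device conductances.
- Inputs are applied as voltages.
- Ohm's law gives each device's current, and Kirchhoff's current law sums the currents on a shared wire. As a result, the dot product is computed where the weights are stored.
- Device programming noise is Gaussian, with an assumed standard deviation `noise_std`.

The integer path stands in for generic approximate computing and does not use IBM's actual number formats.

```python
import random
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def exact_matvec(weights: Matrix, x: Vector) -> Vector:
    """Reference result: y[i] = sum_j W[i][j] * x[j]."""
    return [sum(w * xj for w, xj in zip(row, x)) for row in weights]


def analog_crossbar_matvec(weights: Matrix, x: Vector, g_max: float = 1.0,
                           noise_std: float = 0.02, seed: int = 0) -> Vector:
    """Toy model of in-memory computing on a resistive crossbar (hypothetical).

    Weights map to conductance pairs (G+ - G-), scaled so max |w| -> g_max.
    Inputs act as voltages: each device passes current G * V (Ohm's law),
    and currents on the same wire add up (Kirchhoff's current law).
    """
    rng = random.Random(seed)
    w_abs_max = max(abs(w) for row in weights for w in row) or 1.0
    scale = g_max / w_abs_max
    y = []
    for row in weights:
        current = 0.0
        for w, v in zip(row, x):
            # Program both devices with noise; a conductance cannot be negative.
            g_plus = max(0.0, max(w, 0.0) * scale + rng.gauss(0.0, noise_std))
            g_minus = max(0.0, max(-w, 0.0) * scale + rng.gauss(0.0, noise_std))
            current += (g_plus - g_minus) * v
        y.append(current / scale)  # convert the row current back to weight units
    return y


def reduced_precision_matvec(weights: Matrix, x: Vector, bits: int = 8) -> Vector:
    """Uniform symmetric quantization to signed integers (bits >= 2),
    integer multiply-accumulate, then one rescale per output."""
    q_max = 2 ** (bits - 1) - 1
    w_step = (max(abs(w) for row in weights for w in row) or 1.0) / q_max
    x_step = (max(abs(v) for v in x) or 1.0) / q_max
    q_x = [round(v / x_step) for v in x]
    y = []
    for row in weights:
        acc = sum(round(w / w_step) * qx for w, qx in zip(row, q_x))  # integers only
        y.append(acc * w_step * x_step)
    return y


if __name__ == "__main__":
    rng = random.Random(42)
    W = [[rng.uniform(-1.0, 1.0) for _ in range(64)] for _ in range(16)]
    x = [rng.uniform(-1.0, 1.0) for _ in range(64)]
    ref = exact_matvec(W, x)

    def max_err(y: Vector) -> float:
        return max(abs(a - b) for a, b in zip(ref, y))

    print("analog crossbar:", max_err(analog_crossbar_matvec(W, x)))
    print("8-bit integers: ", max_err(reduced_precision_matvec(W, x, bits=8)))
    print("4-bit integers: ", max_err(reduced_precision_matvec(W, x, bits=4)))
```

Raising `noise_std` or lowering `bits` increases the error. Accuracy-preserving techniques, such as those in the cited study, aim to keep this kind of error from degrading a network's predictions.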

For more than a decade, Systems research has played an important role in developing IBM Z mainframe technology, a key component of the company’s hybrid cloud strategy, particularly in the area of security. IBM Research co-led the development of IBM Z hybrid cloud services and z/OS Container Extensions. These enable z/OS applications to modernize in place and integrate existing workloads with Linux applications.

Hybrid cloud Systems research examples:

- 7nm microprocessor: IBM has been working with Samsung to manufacture 7nm microprocessors for IBM Power Systems™, IBM Z™ and LinuxONE, high-performance computing (HPC) systems and cloud offerings. The agreement combines IBM’s high-performance CPU designs with Samsung’s industry-leading semiconductor manufacturing.

- Security: Robust security for IBM Systems is also a priority. Systems researchers developed the Z Crypto Card, which lets enterprises store encryption keys in hardware with the required level of certification. In addition, IBM Research helped provide the secure data controls, storage protocols and hardware-encrypted protection behind Data Privacy Passports on the z15. These tools let clients protect data by revoking access to it at any time, from any location.

- Cloud native storage with automatic cyber resiliency: Project MachineCity is a new generation of Kubernetes-based cloud native storage. It delivers a new storage consumption model with built-in, automatic logical and physical data protection, and it advises users on the best recovery option to ensure cyber resiliency. With the recovery advisor function, storage users no longer need to work through complicated data recovery options in the middle of a cyber-attack. Instead, they receive the options with the best recovery time objective (RTO) and recovery point objective (RPO).

- Storage hardware and software: Reliable yet cost-effective storage is critical for holding the vast quantities of data collected in clouds and for fueling the brains of AI engines. IBM Systems researchers are enabling the first enterprise-grade all-flash storage systems based on the latest generation of quad-level cell (QLC) flash technology, without compromising performance or reliability. They are also adapting tape-based storage to the needs of cloud environments, so it can hold massive archives of data while dramatically lowering their cost and environmental footprint.

- Quantum-safe encryption: Next-generation encryption is also an important area of research, because quantum computers are likely to eventually be able to break today’s widely used public-key encryption. Our team at IBM Research – Zurich has developed the world’s first quantum-safe tape technology and is continuing to extend this concept to other system components.
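The recovery advisor described for Project MachineCity can be pictured as a ranking problem. The following Python sketch is hypothetical: the `RecoveryPoint` type, the `advise_recovery` function and the ranking rule are assumptions for illustration, not Project MachineCity's design. The sketch works as follows:

1. It assumes that copies captured before the estimated start of an attack are clean.
2. It keeps only the clean copies whose estimated restore time meets the recovery time objective (RTO).
3. It ranks those copies by data loss (the recovery point), least loss first.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import List


@dataclass
class RecoveryPoint:
    """One protected copy of the data (hypothetical model)."""
    name: str
    captured_at: datetime     # when the copy was taken
    restore_time: timedelta   # estimated time to restore from it
    kind: str                 # e.g. "logical snapshot" or "physical copy"


def advise_recovery(points: List[RecoveryPoint], attack_started: datetime,
                    rto: timedelta) -> List[RecoveryPoint]:
    """Rank candidate copies for restore, best first.

    Assumptions: copies taken before attack_started are clean; a copy is
    acceptable only if it can be restored within the RTO; among acceptable
    copies, less data loss wins and a faster restore breaks ties.
    """
    clean = [p for p in points if p.captured_at < attack_started]
    acceptable = [p for p in clean if p.restore_time <= rto]
    return sorted(acceptable,
                  key=lambda p: (attack_started - p.captured_at, p.restore_time))


if __name__ == "__main__":
    attack = datetime(2020, 6, 15, 9, 0)
    copies = [
        RecoveryPoint("snap-0850", datetime(2020, 6, 15, 8, 50), timedelta(minutes=5), "logical snapshot"),
        RecoveryPoint("snap-0800", datetime(2020, 6, 15, 8, 0), timedelta(minutes=5), "logical snapshot"),
        RecoveryPoint("offline-0000", datetime(2020, 6, 15, 0, 0), timedelta(hours=6), "physical copy"),
        RecoveryPoint("snap-0910", datetime(2020, 6, 15, 9, 10), timedelta(minutes=5), "logical snapshot"),
    ]
    for p in advise_recovery(copies, attack_started=attack, rto=timedelta(hours=1)):
        print(p.name, "data loss:", attack - p.captured_at)
```

In this made-up example, the 08:50 snapshot ranks first. The 09:10 snapshot is excluded because it may already be compromised. With an RTO of six hours or more, the physical copy would also qualify.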

Beyond these immediate advances, Systems research also extends to longer-term projects intended to alter the hardware landscape, from supercomputers down to the processors that power them. Besides key technology breakthroughs for DRAM and copper semiconductor interconnects, fundamental research at IBM has led to inventions such as magnetic storage, the Reduced Instruction Set Computer (RISC) architecture, and the Deep Blue and Watson hardware platforms.

Improving systems performance requires investigating a wide variety of areas. These range from new transistor designs and memory materials to the transfer and storage of information at the atomic level and the application of quantum mechanics to computing. Pushing the boundaries of science is how IBM Research advances the technologies underlying AI and hybrid cloud.

Fundamental Systems research examples:

- Nanosheet semiconductor transistor architecture: Nanosheet architecture is a promising new approach to transistor design. Compared with the existing FinFET architecture, it improves performance while reducing power consumption and cost. IBM Research published the first nanosheet paper in 2015, coining the term, and continues to work closely with partners to accelerate the industry’s transition to this new design. In 2017, IBM and research partner Samsung announced an industry-first process to build silicon nanosheet transistors that will enable 5nm chips. Nanosheet technology has since been widely adopted; in 2019, for example, Samsung announced plans to bring it to manufacturing. We continue to innovate around the nanosheet architecture and will present results on a new feature for it, the “air spacer,” at VLSI this month. Nanosheet’s technology features make it a superior device architecture for both mobile and high-performance computing systems.

- Magnetic memory (MRAM) materials and technology: In the end, you still need to store and process information. As part of Research’s ongoing investigation into new and improved non-volatile memory devices, IBM scientists have developed several types of magnetoresistive random-access memory (MRAM), which uses the spin of electrons to store bits. Each MRAM bit cell contains two magnets. One has a fixed orientation, and the second can be switched. The first generation of MRAM, called field-switched MRAM, was written by applying large currents that generate magnetic fields to switch the second magnet between north and south. The most recent version is spin-transfer torque MRAM (STT-MRAM). IBM researchers aim to improve STT-MRAM until it is dense and fast enough to be used as cache memory in IBM’s servers.[2]
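The standard tunnel magnetoresistance (TMR) relation shows how a stored magnetic bit is read back. The cell's resistance is low when the switchable magnet points the same way as the fixed one and high when it points the opposite way. A sense circuit compares the cell against a reference resistance that lies between the two states.

The following Python sketch models that read. The resistance values, the TMR ratio, the ±5% device variation and the bit convention (parallel = 0, antiparallel = 1) are assumptions chosen for illustration, not IBM device data. The sketch covers reading only. It does not model how a bit is written, whether by magnetic fields in field-switched MRAM or by spin-polarized current in STT-MRAM.

```python
import random

# Assumed, illustrative device parameters (not measured IBM values).
R_PARALLEL = 5_000.0                     # ohms, both magnets aligned
TMR = 1.5                                # TMR ratio of 150%
R_ANTIPARALLEL = R_PARALLEL * (1 + TMR)  # from TMR = (R_AP - R_P) / R_P
R_REFERENCE = (R_PARALLEL + R_ANTIPARALLEL) / 2


def tmr_ratio(r_p: float, r_ap: float) -> float:
    """Tunnel magnetoresistance ratio: (R_AP - R_P) / R_P."""
    return (r_ap - r_p) / r_p


def write_bit(bit: int) -> str:
    """Orient the switchable magnet relative to the fixed one (assumed convention)."""
    return "antiparallel" if bit else "parallel"


def cell_resistance(state: str, rng: random.Random, variation: float = 0.05) -> float:
    """Nominal resistance for the state, with uniform device-to-device variation."""
    nominal = R_ANTIPARALLEL if state == "antiparallel" else R_PARALLEL
    return nominal * (1 + rng.uniform(-variation, variation))


def read_bit(resistance: float) -> int:
    """Sense-amplifier model: above the reference reads as 1, below as 0."""
    return 1 if resistance > R_REFERENCE else 0


if __name__ == "__main__":
    rng = random.Random(7)
    data = [1, 0, 1, 1, 0, 0, 1, 0]
    cells = [write_bit(b) for b in data]
    readback = [read_bit(cell_resistance(s, rng)) for s in cells]
    print("TMR:", tmr_ratio(R_PARALLEL, R_ANTIPARALLEL))
    print("read back correctly:", readback == data)
```

The wider the gap between the two resistance states relative to device variation, the more reliably bits can be read. The margin shrinks as devices get smaller and more variable, so it is one of the factors that matter when pushing MRAM toward cache-level density and speed.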

Systems research is crucial to supporting rapidly diversifying computing platforms that use bits, neurons and qubits. These platforms let enterprises conduct their business across a tapestry of cloud computing and on-premises networks. IBM Research will continue to investigate new ways to improve the company’s full-systems approach, which encompasses hardware, software and semiconductors and which IBM needs to lead in the AI and hybrid cloud era.

References

- [1] Joshi, V. et al. Accurate deep neural network inference using computational phase-change memory. Nat Commun 11, 2473 (2020).

- [2] Hu, G. et al. Spin-transfer torque MRAM with reliable 2 ns writing for last level cache applications. In 2019 IEEE International Electron Devices Meeting (IEDM), 2.6.1–2.6.4 (2019).